Reasoning about Counterfactuals to Improve Human Inverse Reinforcement Learning

Paper and Code

Apr 07, 2022

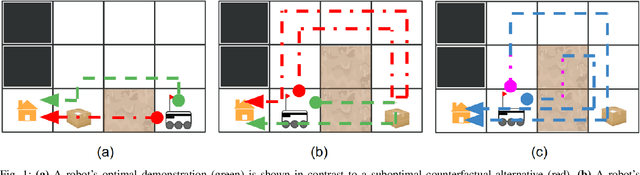

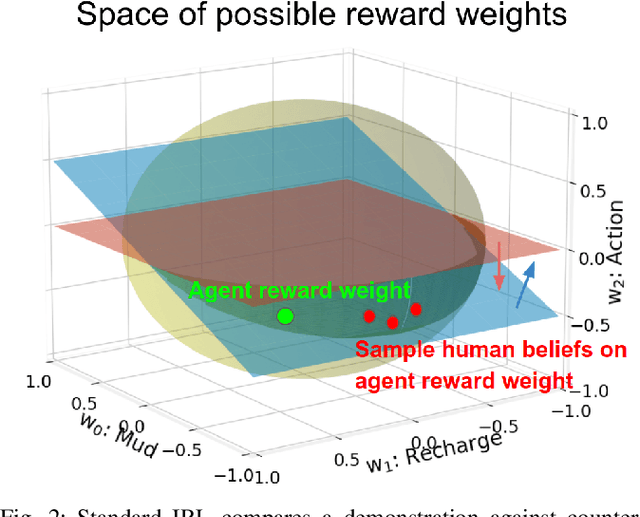

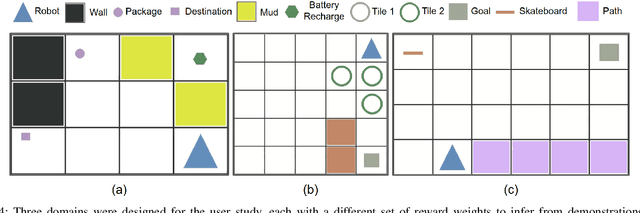

To collaborate well with robots, we must be able to understand their decision making. Humans naturally infer other agents' beliefs and desires by reasoning about their observable behavior in a way that resembles inverse reinforcement learning (IRL). Thus, robots can convey their beliefs and desires by providing demonstrations that are informative for a human's IRL. An informative demonstration is one that differs strongly from the learner's expectations of what the robot will do given their current understanding of the robot's decision making. However, standard IRL does not model the learner's existing expectations, and thus cannot do this counterfactual reasoning. We propose to incorporate the learner's current understanding of the robot's decision making into our model of human IRL, so that our robot can select demonstrations that maximize the human's understanding. We also propose a novel measure for estimating the difficulty for a human to predict instances of a robot's behavior in unseen environments. A user study finds that our test difficulty measure correlates well with human performance and confidence. Interestingly, considering human beliefs and counterfactuals when selecting demonstrations decreases human performance on easy tests, but increases performance on difficult tests, providing insight on how to best utilize such models.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge