Realistic Traffic Generation for Web Robots

Paper and Code

Dec 15, 2017

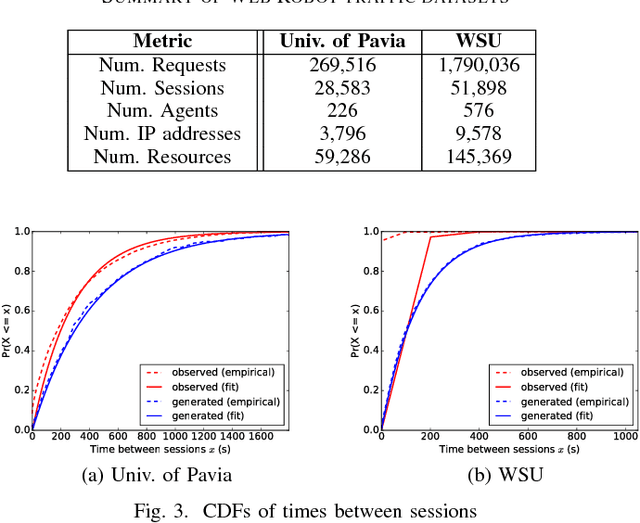

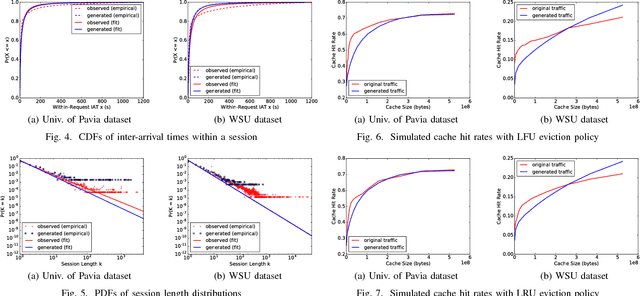

Critical to evaluating the capacity, scalability, and availability of web systems are realistic web traffic generators. Web traffic generation is a classic research problem, no generator accounts for the characteristics of web robots or crawlers that are now the dominant source of traffic to a web server. Administrators are thus unable to test, stress, and evaluate how their systems perform in the face of ever increasing levels of web robot traffic. To resolve this problem, this paper introduces a novel approach to generate synthetic web robot traffic with high fidelity. It generates traffic that accounts for both the temporal and behavioral qualities of robot traffic by statistical and Bayesian models that are fitted to the properties of robot traffic seen in web logs from North America and Europe. We evaluate our traffic generator by comparing the characteristics of generated traffic to those of the original data. We look at session arrival rates, inter-arrival times and session lengths, comparing and contrasting them between generated and real traffic. Finally, we show that our generated traffic affects cache performance similarly to actual traffic, using the common LRU and LFU eviction policies.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge