RatBodyFormer: Rodent Body Surface from Keypoints

Paper and Code

Dec 12, 2024

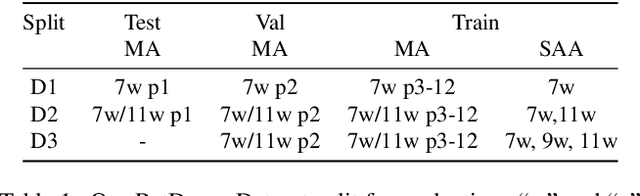

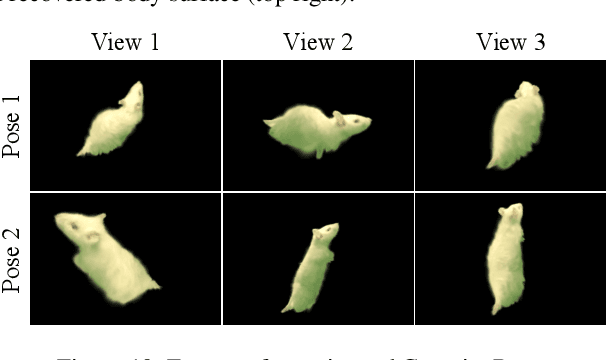

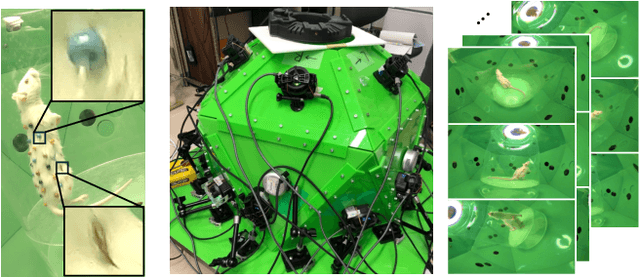

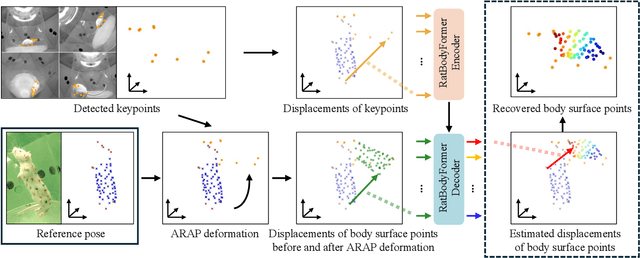

Rat behavior modeling goes to the heart of many scientific studies, yet the textureless body surface evades automatic analysis as it literally has no keypoints that detectors can find. The movement of the body surface, however, is a rich source of information for deciphering the rat behavior. We introduce two key contributions to automatically recover densely 3D sampled rat body surface points, passively. The first is RatDome, a novel multi-camera system for rat behavior capture, and a large-scale dataset captured with it that consists of pairs of 3D keypoints and 3D body surface points. The second is RatBodyFormer, a novel network to transform detected keypoints to 3D body surface points. RatBodyFormer is agnostic to the exact locations of the 3D body surface points in the training data and is trained with masked-learning. We experimentally validate our framework with a number of real-world experiments. Our results collectively serve as a novel foundation for automated rat behavior analysis and will likely have far-reaching implications for biomedical and neuroscientific research.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge