Quasi-potential theory for escape problem: Quantitative sharpness effect on SGD's escape from local minima


Nov 07, 2021

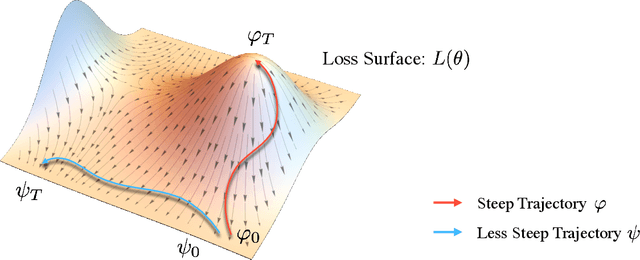

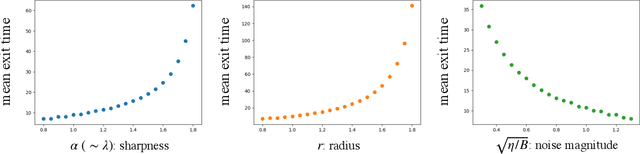

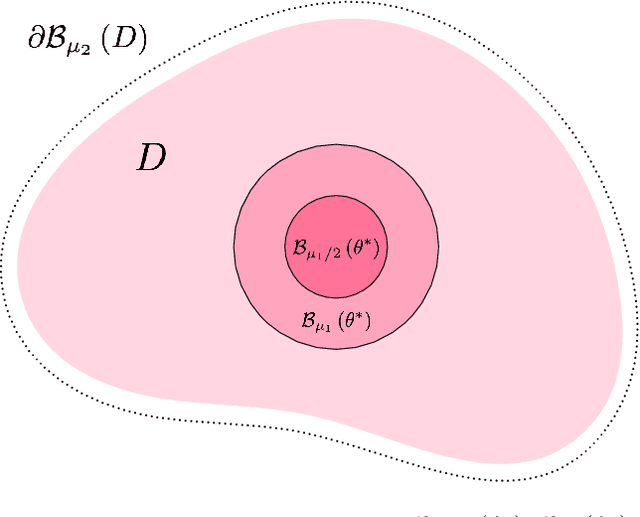

We develop a quantitative theory of the escape problem for stochastic gradient descent (SGD) and investigate how the sharpness of loss surfaces affects the escape. Deep learning has achieved tremendous success in various domains; however, it has also raised many open theoretical questions. A typical one is why SGD can find parameters that generalize well over non-convex loss surfaces. The escape problem is one approach to this question: it investigates how efficiently SGD escapes from local minima. In this paper, we develop a quasi-potential theory for the escape problem by applying a theory of stochastic dynamical systems. We show that the quasi-potential theory can handle both the geometric properties of loss surfaces and the covariance structure of gradient noise in a unified manner, whereas previous works studied them separately. Our theoretical results imply that (i) the sharpness of loss surfaces contributes to the slow escape of SGD, and (ii) SGD's noise structure cancels this effect and exponentially accelerates the escape. We also conduct experiments to empirically validate our theory using neural networks trained on real data.
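To make the escape problem concrete, here is a minimal toy sketch. It is not the paper's method or experimental setup. It assumes a hypothetical one-dimensional double-well loss `L(x) = x**4/4 - x**2/2` and replaces SGD's gradient noise with isotropic Gaussian noise of scale `sigma`, so it ignores the noise covariance structure that the paper analyzes. The function names, step size, and noise levels are illustrative choices only.

```python
import math
import random


def grad_loss(x):
    # Gradient of the toy double-well loss L(x) = x**4/4 - x**2/2,
    # which has minima at x = -1 and x = +1 and a barrier at x = 0.
    return x**3 - x


def escape_steps(sigma, lr=0.01, max_steps=200_000, rng=None):
    # Noisy gradient descent started at the left minimum (x = -1).
    # Returns the number of steps until the iterate first crosses the
    # barrier at x = 0, or max_steps if it never does.
    rng = rng or random.Random(0)
    x = -1.0
    for step in range(1, max_steps + 1):
        noise = math.sqrt(lr) * sigma * rng.gauss(0.0, 1.0)
        x = x - lr * grad_loss(x) + noise
        if x > 0.0:
            return step
    return max_steps


def mean_escape_steps(sigma, trials=50, seed=0):
    # Average escape time over independent runs sharing one seeded RNG.
    rng = random.Random(seed)
    total = sum(escape_steps(sigma, rng=rng) for _ in range(trials))
    return total / trials
```

Calling `mean_escape_steps(0.5)` and `mean_escape_steps(0.8)` compares the average escape time at two noise levels. In this toy setting, the weaker noise should take noticeably longer to cross the barrier, which is the kind of escape-time dependence the theory quantifies.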
