QGNN: Value Function Factorisation with Graph Neural Networks


May 25, 2022

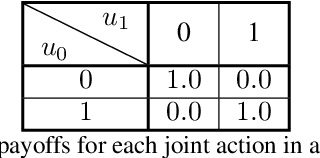

In multi-agent reinforcement learning, the use of a global objective is a powerful tool for incentivising cooperation. Unfortunately, it is not sample-efficient to train individual agents with a global reward, because the reward does not necessarily correlate with an agent's individual actions. This problem can be solved by factorising the global value function into local value functions. Early work in this domain performed factorisation by conditioning local value functions purely on local information. Recently, it has been shown that providing both local information and an encoding of the global state can promote cooperative behaviour. In this paper we propose QGNN, the first value factorisation method to use a graph neural network (GNN) based model. The multi-layer message passing architecture of QGNN provides more representational complexity than models in prior work, allowing it to produce a more effective factorisation. QGNN also introduces a permutation-invariant mixer which is able to match the performance of other methods, even with significantly fewer parameters. We evaluate our method against several baselines, including QMIX-Att, GraphMIX, QMIX, VDN, and hybrid architectures. Our experiments include StarCraft, the standard benchmark for credit assignment; Estimate Game, a custom environment that explicitly models inter-agent dependencies; and Coalition Structure Generation, a foundational problem with real-world applications. The results show that QGNN consistently outperforms state-of-the-art value factorisation baselines.
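
To make the idea of a permutation-invariant mixer concrete, the following is a minimal illustrative sketch, not the QGNN architecture itself. It assumes a simple sum-pooling design in which each agent's local value passes through a shared transform before pooling; the functions `phi`, `rho`, `mix`, and `vdn_mix`, as well as all weights and sample values, are hypothetical and chosen only for illustration. The additive `vdn_mix` mirrors the style of factorisation used by VDN, one of the baselines named above.

```python
import itertools
import math


def phi(q_local, w=0.5, b=0.1):
    """Shared per-agent transform (hypothetical weights, same for every agent)."""
    return math.tanh(w * q_local + b)


def rho(pooled, w=2.0, b=0.0):
    """Post-pooling transform mapping the pooled feature to a global value."""
    return w * pooled + b


def mix(local_qs):
    """Sum-pooling mixer: summation ignores agent order, so the output is
    invariant to any permutation of the agents' local values."""
    return rho(sum(phi(q) for q in local_qs))


def vdn_mix(local_qs):
    """Additive factorisation: the global value is the sum of local values."""
    return sum(local_qs)


# Hypothetical local Q-values for three agents.
local_qs = [1.2, -0.4, 0.7]
q_tot = mix(local_qs)

# Check that every ordering of the agents yields the same global value
# (up to floating-point tolerance).
is_invariant = all(
    math.isclose(mix(list(perm)), q_tot)
    for perm in itertools.permutations(local_qs)
)
print("Q_tot:", q_tot)
print("permutation invariant:", is_invariant)
```

Because the mixer shares `phi` across agents and pools by summation, its parameter count does not grow with the number of agents, which is one plausible reason a permutation-invariant design can be compact.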
