Pseudo-Rehearsal: Achieving Deep Reinforcement Learning without Catastrophic Forgetting


Dec 06, 2018

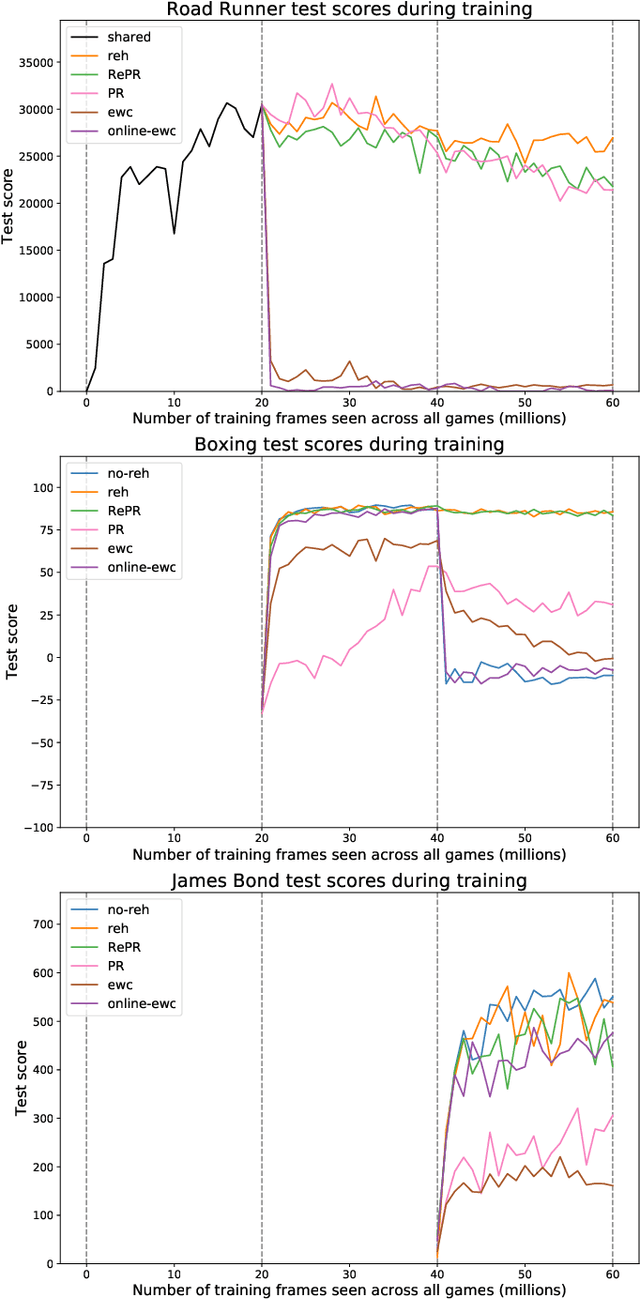

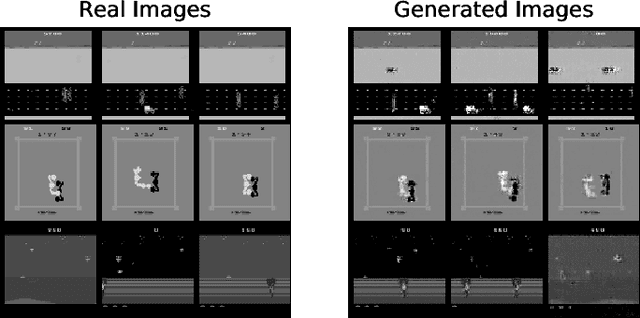

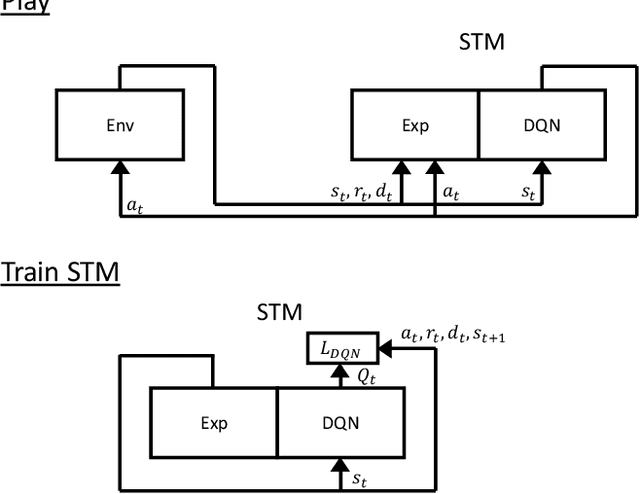

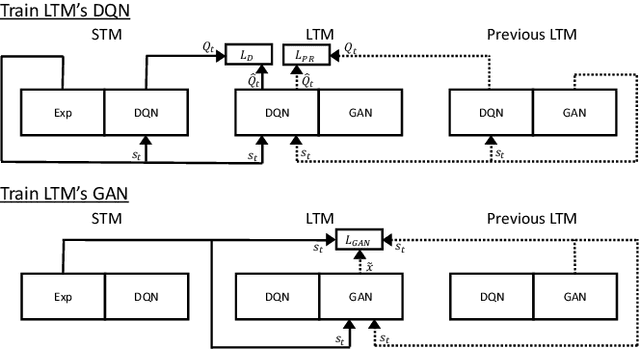

Neural networks can achieve extraordinary results on a wide variety of tasks. However, when they learn a number of tasks sequentially, they tend to learn the new task while destructively forgetting previous tasks. One solution to this problem is pseudo-rehearsal, in which the network learns the new task while rehearsing generated items representative of the previous tasks. We demonstrate that pairing pseudo-rehearsal methods with a generative network is an effective solution to this problem in reinforcement learning. Our method iteratively learns three Atari 2600 games while retaining above-human-level performance on all three, performing similarly to a network that rehearses real examples from all previously learnt tasks.
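To make the idea of pseudo-rehearsal concrete, the following is a minimal sketch and not the paper's implementation. It assumes a linear model in place of a deep reinforcement learning network, and a plain random sampler (`sample_inputs`, a hypothetical stand-in) in place of the generative network. The function name `pseudo_rehearsal_step` and all parameters are illustrative. Generated inputs are labelled by a frozen copy of the old model and mixed with the new task's data in each update.

```python
import numpy as np


def pseudo_rehearsal_step(w, w_old, new_x, new_y, sample_inputs, n_pseudo, lr=0.1):
    """One gradient step on new-task data mixed with pseudo-items.

    w             -- current weights being trained, shape (d,)
    w_old         -- frozen weights learnt on previous tasks, shape (d,)
    new_x, new_y  -- batch from the new task, shapes (n, d) and (n,)
    sample_inputs -- assumed stand-in for a generative model: n -> (n, d) array
    """
    # Generate pseudo-items and label them with the frozen old model.
    pseudo_x = sample_inputs(n_pseudo)
    pseudo_y = pseudo_x @ w_old

    # Train on the union of new-task data and rehearsed pseudo-items.
    x = np.concatenate([new_x, pseudo_x])
    y = np.concatenate([new_y, pseudo_y])

    # Gradient of the mean squared error for a linear model.
    grad = 2.0 * x.T @ (x @ w - y) / len(x)
    return w - lr * grad


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w_old = np.array([1.0, -2.0, 0.5])          # weights after the old task
    new_x = rng.normal(size=(8, 3))
    new_y = new_x @ np.array([0.0, 1.0, 1.0])   # toy new-task targets

    w = w_old.copy()
    for _ in range(100):
        w = pseudo_rehearsal_step(
            w, w_old, new_x, new_y,
            sample_inputs=lambda n: rng.normal(size=(n, 3)),
            n_pseudo=8,
        )
    print("weights after rehearsed training:", w)
```

Because the pseudo-item targets come from the old model, the rehearsal term pulls the weights back towards the old behaviour, while the new-task term pulls them towards the new targets. No stored examples from previous tasks are needed.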
