Provably robust deep generative models


Apr 22, 2020

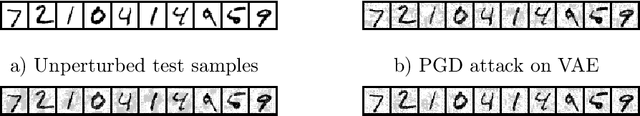

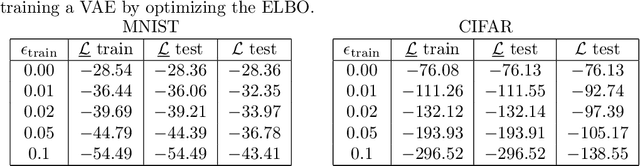

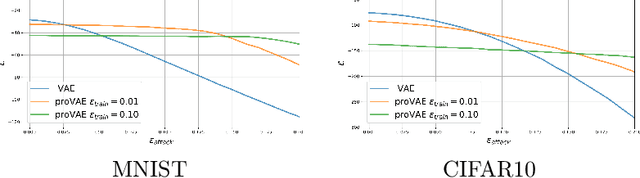

Recent work in adversarial attacks has developed provably robust methods for training deep neural network classifiers. However, although they are often mentioned in the context of robustness, deep generative models themselves have received relatively little attention in terms of formally analyzing their robustness properties. In this paper, we propose a method for training provably robust generative models, specifically a provably robust version of the variational auto-encoder (VAE). To do so, we first formally define a (certifiably) robust lower bound on the variational lower bound of the likelihood, and then show how this bound can be optimized during training to produce a robust VAE. We evaluate the method on simple examples, and show that it is able to produce generative models that are substantially more robust to adversarial attacks (i.e., an adversary trying to perturb inputs so as to drastically lower their likelihood under the model).
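The chain of bounds described above can be written out as a short sketch. The notation here is an assumption made for illustration and is not taken from the paper: $q(z \mid x)$ is the encoder, $p(x \mid z)$ the decoder, $p(z)$ the prior, $\epsilon$ the perturbation budget, and $\|\cdot\|$ an unspecified norm defining the adversary's threat model.

$$
\begin{aligned}
\log p(x) \;&\ge\; \mathcal{L}(x) \;=\; \mathbb{E}_{q(z \mid x)}\big[\log p(x \mid z)\big] \;-\; \mathrm{KL}\big(q(z \mid x)\,\|\,p(z)\big), \\
\underline{\mathcal{L}}_{\epsilon}(x) \;&\le\; \min_{\|\delta\| \le \epsilon} \mathcal{L}(x + \delta) \;\le\; \log p(x + \delta') \quad \text{for every } \|\delta'\| \le \epsilon .
\end{aligned}
$$

Under these assumptions, any computable $\underline{\mathcal{L}}_{\epsilon}(x)$ satisfying the second line certifies that no admissible perturbation can push the log-likelihood below it, and training would maximize $\underline{\mathcal{L}}_{\epsilon}(x)$ over the data in place of the standard lower bound $\mathcal{L}(x)$.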
