Provable Convergence of Nesterov Accelerated Method for Over-Parameterized Neural Networks


Jul 05, 2021

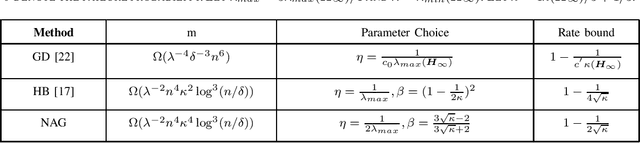

Despite the empirical success of deep learning, there is still limited theoretical understanding of why a randomly initialized neural network trained by first-order optimization methods is able to achieve zero training loss, even though its loss landscape is non-convex and non-smooth. Recently, several works have demystified this phenomenon in the over-parameterized regime. In this work, we make further progress in this area by considering a commonly used momentum optimization algorithm: the Nesterov accelerated method (NAG). We analyze the convergence of NAG for a two-layer fully connected neural network with ReLU activation. Specifically, we prove that the error of NAG converges to zero at a linear rate $1-\Theta(1/\sqrt{\kappa})$, where $\kappa > 1$ is determined by the initialization and the architecture of the neural network. Compared to the rate $1-\Theta(1/\kappa)$ of gradient descent, NAG achieves an acceleration. In addition, the result confirms that NAG and the heavy-ball method can achieve a similar convergence rate.
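The gap between the two rates can be illustrated on a toy problem. The sketch below is not the paper's setting: instead of a two-layer ReLU network it uses a diagonal strongly convex quadratic whose condition number plays the role of $\kappa$. The step size $1/L$ and the momentum $(\sqrt{\kappa}-1)/(\sqrt{\kappa}+1)$ are the textbook choices for this quadratic case, assumed here for illustration. All function names are hypothetical.

```python
import math

def quadratic_loss(x, eigs):
    # f(x) = 0.5 * sum_i lambda_i * x_i^2, minimized at x = 0 with f = 0.
    return 0.5 * sum(lam * xi * xi for lam, xi in zip(eigs, x))

def run_gd(eigs, x0, steps):
    # Plain gradient descent with step size 1/L (L = largest eigenvalue).
    eta = 1.0 / max(eigs)
    x = list(x0)
    for _ in range(steps):
        x = [xi - eta * lam * xi for lam, xi in zip(eigs, x)]
    return quadratic_loss(x, eigs)

def run_nag(eigs, x0, steps):
    # Nesterov's method: extrapolate with momentum, then take a gradient
    # step evaluated at the extrapolated point y.
    kappa = max(eigs) / min(eigs)
    eta = 1.0 / max(eigs)
    beta = (math.sqrt(kappa) - 1.0) / (math.sqrt(kappa) + 1.0)
    x_prev = list(x0)
    x = list(x0)
    for _ in range(steps):
        y = [xi + beta * (xi - xp) for xi, xp in zip(x, x_prev)]
        x_prev = x
        x = [yi - eta * lam * yi for lam, yi in zip(eigs, y)]
    return quadratic_loss(x, eigs)

# Toy problem (assumed values): eigenvalues spread over [1, kappa].
kappa = 100.0
n = 50
eigs = [1.0 + (kappa - 1.0) * i / (n - 1) for i in range(n)]
x0 = [1.0] * n

gd_loss = run_gd(eigs, x0, 200)
nag_loss = run_nag(eigs, x0, 200)
print(f"GD  loss after 200 steps: {gd_loss:.3e}")
print(f"NAG loss after 200 steps: {nag_loss:.3e}")
```

With $\kappa = 100$, gradient descent contracts the slowest direction by a factor of about $1 - 1/\kappa = 0.99$ per step, while NAG contracts it by about $1 - 1/\sqrt{\kappa} = 0.9$, so after the same number of steps the NAG loss is many orders of magnitude smaller.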
