Prototype-based interpretation of the functionality of neurons in winner-take-all neural networks

Paper and Code

Aug 20, 2020

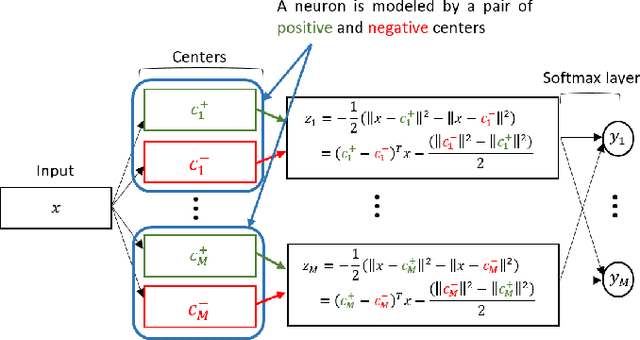

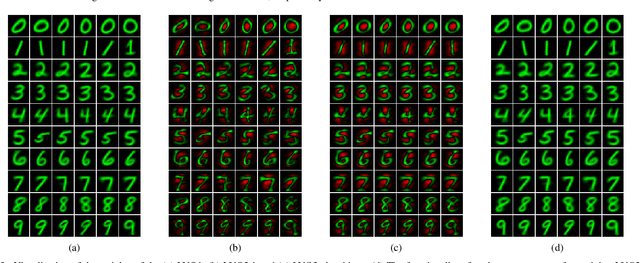

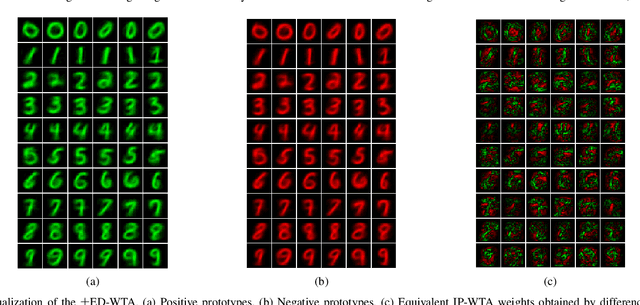

Prototype-based learning (PbL) using a winner-take-all (WTA) network based on minimum Euclidean distance (ED-WTA) is an intuitive approach to multiclass classification. By constructing meaningful class centers, PbL provides higher interpretability and generalization than hyperplane-based learning (HbL) methods based on maximum Inner Product (IP-WTA) and can efficiently detect and reject samples that do not belong to any classes. In this paper, we first prove the equivalence of IP-WTA and ED-WTA from a representational point of view. Then, we show that naively using this equivalence leads to unintuitive ED-WTA networks in which the centers have high distances to data that they represent. We propose $\pm$ED-WTA which models each neuron with two prototypes: one positive prototype representing samples that are modeled by this neuron and a negative prototype representing the samples that are erroneously won by that neuron during training. We propose a novel training algorithm for the $\pm$ED-WTA network, which cleverly switches between updating the positive and negative prototypes and is essential to the emergence of interpretable prototypes. Unexpectedly, we observed that the negative prototype of each neuron is indistinguishably similar to the positive one. The rationale behind this observation is that the training data that are mistaken with a prototype are indeed similar to it. The main finding of this paper is this interpretation of the functionality of neurons as computing the difference between the distances to a positive and a negative prototype, which is in agreement with the BCM theory. In our experiments, we show that the proposed $\pm$ED-WTA method constructs highly interpretable prototypes that can be successfully used for detecting outlier and adversarial examples.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge