Prosocial Norm Emergence in Multiagent Systems

Paper and Code

Dec 29, 2020

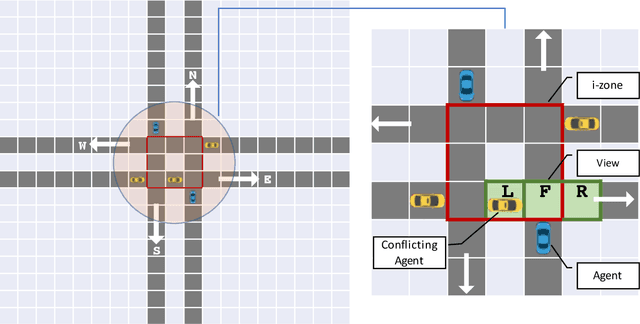

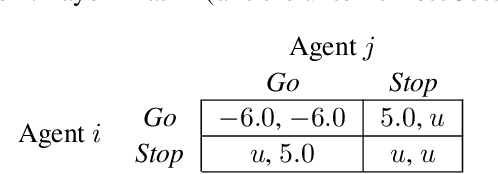

Multiagent systems provide a basis of developing systems of autonomous entities and thus find application in a variety of domains. We consider a setting where not only the member agents are adaptive but also the multiagent system itself is adaptive. Specifically, the social structure of a multiagent system can be reflected in the social norms among its members. It is well recognized that the norms that arise in society are not always beneficial to its members. We focus on prosocial norms, which help achieve positive outcomes for society and often provide guidance to agents to act in a manner that takes into account the welfare of others. Specifically, we propose Cha, a framework for the emergence of prosocial norms. Unlike previous norm emergence approaches, Cha supports continual change to a system (agents may enter and leave), and dynamism (norms may change when the environment changes). Importantly, Cha agents incorporate prosocial decision making based on inequity aversion theory, reflecting an intuition of guilt from being antisocial. In this manner, Cha brings together two important themes in prosociality: decision making by individuals and fairness of system-level outcomes. We demonstrate via simulation that Cha can improve aggregate societal gains and fairness of outcomes.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge