Profiling AI Models: Towards Efficient Computation Offloading in Heterogeneous Edge AI Systems

Paper and Code

Oct 30, 2024

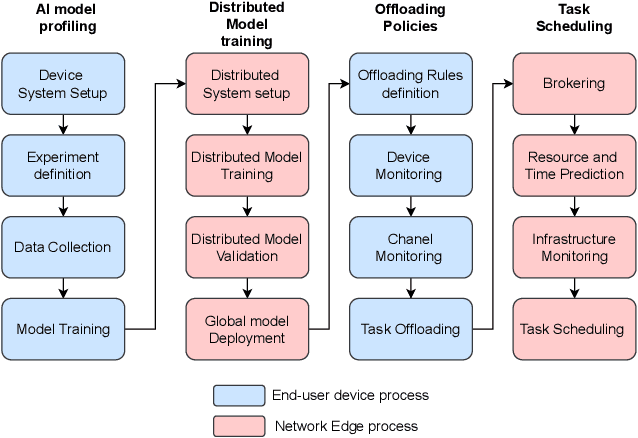

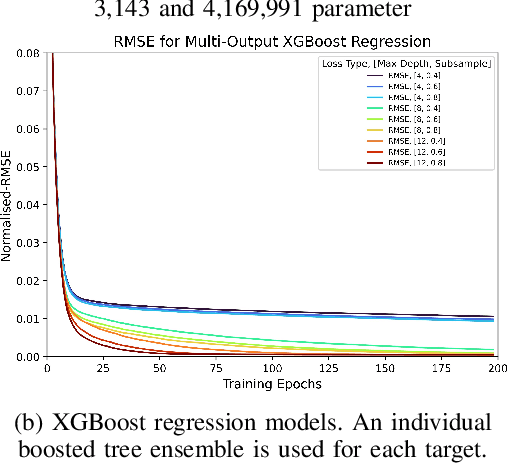

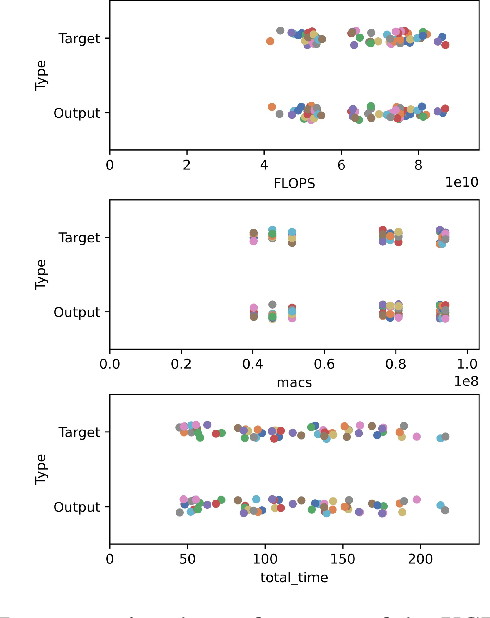

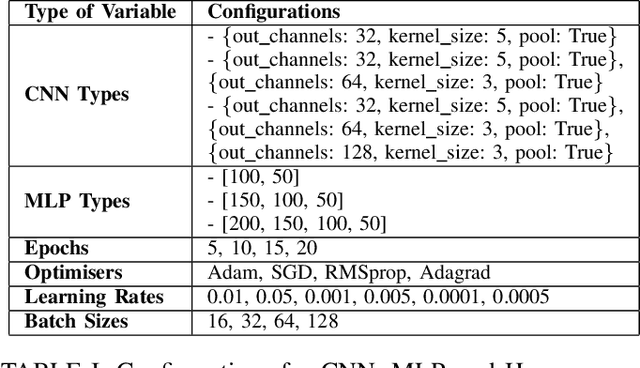

The rapid growth of end-user AI applications, such as computer vision and generative AI, has led to immense data and processing demands often exceeding user devices' capabilities. Edge AI addresses this by offloading computation to the network edge, crucial for future services in 6G networks. However, it faces challenges such as limited resources during simultaneous offloads and the unrealistic assumption of homogeneous system architecture. To address these, we propose a research roadmap focused on profiling AI models, capturing data about model types, hyperparameters, and underlying hardware to predict resource utilisation and task completion time. Initial experiments with over 3,000 runs show promise in optimising resource allocation and enhancing Edge AI performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge