Probably Approximately Correct Explanations of Machine Learning Models via Syntax-Guided Synthesis


Sep 18, 2020

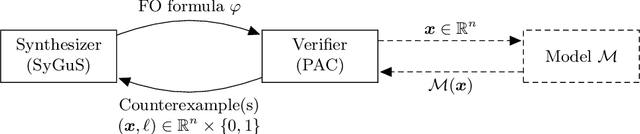

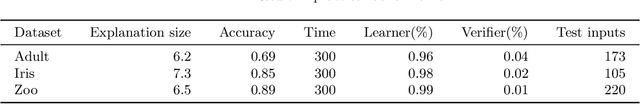

We propose a novel approach to understanding the decision making of complex machine learning models (e.g., deep neural networks) that combines probably approximately correct (PAC) learning with a logic inference methodology called syntax-guided synthesis (SyGuS). We prove that our framework produces explanations that, with high probability, make only a few errors, and we show empirically that it is effective in generating small, human-interpretable explanations.
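The abstract does not specify the grammar, solver, or sample bound, so the following is only a minimal sketch of the general idea under stated assumptions, not the authors' implementation. It treats a hypothetical `black_box` function as the opaque model, samples inputs, and searches a tiny hypothetical Boolean grammar (variables, `not`, `and`, `or`) smallest-first for an expression consistent with the model's labels. A naive bottom-up enumerator stands in for a real SyGuS solver. The number of samples comes from the textbook realizable-case PAC bound for a finite hypothesis class H: any consistent hypothesis has error at most ε with probability at least 1 − δ once m ≥ (ln|H| + ln(1/δ)) / ε. All names (`black_box`, `enumerate_by_size`, `pac_sample_size`, `synthesize_explanation`) are illustrative assumptions.

```python
import math
import random
from typing import Callable, Dict, List, Optional, Sequence, Tuple

# Hypothetical expression encoding for a tiny Boolean grammar:
#   ("var", i) | ("not", e) | ("and", a, b) | ("or", a, b)
Expr = tuple
Point = Tuple[int, ...]


def black_box(x: Sequence[int]) -> bool:
    """Hypothetical stand-in for an opaque trained model."""
    return bool((x[0] and x[1]) or x[2])


def evaluate(e: Expr, x: Sequence[int]) -> bool:
    """Evaluate a grammar term on one Boolean input vector."""
    op = e[0]
    if op == "var":
        return bool(x[e[1]])
    if op == "not":
        return not evaluate(e[1], x)
    if op == "and":
        return evaluate(e[1], x) and evaluate(e[2], x)
    return evaluate(e[1], x) or evaluate(e[2], x)


def show(e: Expr) -> str:
    """Render a term as a human-readable formula."""
    if e[0] == "var":
        return f"x{e[1]}"
    if e[0] == "not":
        return f"not {show(e[1])}"
    return f"({show(e[1])} {e[0]} {show(e[2])})"


def enumerate_by_size(n_vars: int, max_size: int) -> Dict[int, List[Expr]]:
    """Bottom-up enumeration of every grammar term, grouped by AST size."""
    by_size: Dict[int, List[Expr]] = {1: [("var", i) for i in range(n_vars)]}
    for s in range(2, max_size + 1):
        terms: List[Expr] = [("not", e) for e in by_size[s - 1]]
        for left in range(1, s - 1):  # binary node: 1 + left + right == s
            for a in by_size[left]:
                for b in by_size[s - 1 - left]:
                    terms.append(("and", a, b))
                    terms.append(("or", a, b))
        by_size[s] = terms
    return by_size


def pac_sample_size(num_hypotheses: int, epsilon: float, delta: float) -> int:
    """Realizable-case bound for a consistent learner over a finite class:
    m >= (ln|H| + ln(1/delta)) / epsilon."""
    return math.ceil((math.log(num_hypotheses) + math.log(1 / delta)) / epsilon)


def synthesize_explanation(
    model: Callable[[Sequence[int]], bool],
    n_vars: int,
    max_size: int,
    epsilon: float,
    delta: float,
    seed: int = 0,
) -> Tuple[Optional[Expr], List[Point]]:
    """Return the smallest term consistent with the model on m sampled inputs."""
    by_size = enumerate_by_size(n_vars, max_size)
    m = pac_sample_size(sum(len(t) for t in by_size.values()), epsilon, delta)
    rng = random.Random(seed)
    # i.i.d. uniform inputs; the error guarantee refers to this same distribution.
    samples = [tuple(rng.randint(0, 1) for _ in range(n_vars)) for _ in range(m)]
    labels = [model(x) for x in samples]
    # Smallest-first search stands in for a SyGuS solver's size-ordered search.
    for size in sorted(by_size):
        for e in by_size[size]:
            if all(evaluate(e, x) == y for x, y in zip(samples, labels)):
                return e, samples
    return None, samples


if __name__ == "__main__":
    expr, samples = synthesize_explanation(
        black_box, n_vars=3, max_size=5, epsilon=0.05, delta=0.01
    )
    print(len(samples), "samples;", "explanation:", show(expr) if expr else None)
```

Searching in order of increasing size biases the result toward short formulas, which is one simple way to favor interpretable explanations. The bound assumes the model's behavior is expressible in the grammar (the realizable case); in this toy setup the hypothetical `black_box` is itself a size-5 term, so a consistent expression always exists.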
