Precision-Recall Curve (PRC) Classification Trees


Nov 15, 2020

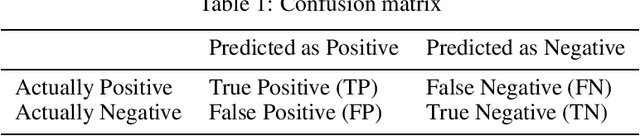

The classification of imbalanced data has presented a significant challenge for most well-known classification algorithms, which were often designed for data with relatively balanced class distributions. Nevertheless, skewed class distribution is a common feature of real-world problems. It is especially prevalent in application domains with a great need for machine learning and better predictive analysis, such as disease diagnosis, fraud detection, bankruptcy prediction, and suspect identification. In this paper, we propose a novel tree-based algorithm that uses the area under the precision-recall curve (AUPRC) for variable selection in the classification context. Our algorithm, named the "Precision-Recall Curve classification tree", or simply the "PRC classification tree", modifies two crucial stages of tree building. The first stage maximizes the area under the precision-recall curve in node variable selection. The second stage maximizes the harmonic mean of recall and precision (the F-measure) in threshold selection. We found that the proposed PRC classification tree and its subsequent extension, the PRC random forest, work well, especially for class-imbalanced data sets. We have demonstrated that our methods outperform their classic counterparts, the usual CART and random forest, on both synthetic and real data. Furthermore, the ROC classification tree previously proposed by our group has shown good performance on imbalanced data. The combination of the two, the PRC-ROC tree, also shows great promise in identifying the minority class.
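
The two stages described in the abstract can be illustrated with a minimal sketch of a single node split. This is not the authors' implementation: the function names (`average_precision`, `f_measure`, `best_split`), the step-wise AUPRC estimate, the handling of tied scores, and the trick of trying each feature in both directions are all assumptions made for illustration. Labels are assumed to be 0/1, with 1 as the minority class.

```python
def average_precision(scores, labels):
    """Step-wise estimate of the area under the precision-recall curve."""
    n_pos = sum(labels)
    if n_pos == 0:
        return 0.0
    # Rank samples by descending score; tied scores enter the curve together.
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    tp = fp = 0
    prev_recall = 0.0
    area = 0.0
    i = 0
    while i < len(order):
        j = i
        while j < len(order) and scores[order[j]] == scores[order[i]]:
            if labels[order[j]] == 1:
                tp += 1
            else:
                fp += 1
            j += 1
        recall = tp / n_pos
        precision = tp / (tp + fp)
        area += (recall - prev_recall) * precision
        prev_recall = recall
        i = j
    return area


def f_measure(tp, fp, fn):
    """Harmonic mean of precision and recall, written in terms of counts."""
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def best_split(X, y):
    """Pick a feature by AUPRC, then a threshold on it by F-measure."""
    # Stage 1: variable selection. Each feature is scored in both directions
    # (assumption) so that negatively associated features are not ignored.
    best = None
    for j in range(len(X[0])):
        column = [row[j] for row in X]
        for sign in (1, -1):
            auprc = average_precision([sign * v for v in column], y)
            if best is None or auprc > best[0]:
                best = (auprc, j, sign)
    auprc, j, sign = best

    # Stage 2: threshold selection. A sample is predicted positive when its
    # signed feature value is at least the candidate threshold.
    column = [sign * row[j] for row in X]
    best_f, best_t = -1.0, None
    for t in sorted(set(column)):
        tp = sum(1 for v, lab in zip(column, y) if v >= t and lab == 1)
        fp = sum(1 for v, lab in zip(column, y) if v >= t and lab == 0)
        fn = sum(1 for v, lab in zip(column, y) if v < t and lab == 1)
        f = f_measure(tp, fp, fn)
        if f > best_f:
            best_f, best_t = f, t
    return {"feature": j, "sign": sign, "threshold": best_t,
            "auprc": auprc, "f_measure": best_f}


# Toy imbalanced data: 2 positives out of 8; feature 0 separates the classes.
X = [[0.1, 0.5], [0.2, 0.1], [0.3, 0.9], [0.4, 0.3],
     [0.5, 0.7], [0.6, 0.2], [0.8, 0.4], [0.9, 0.6]]
y = [0, 0, 0, 0, 0, 0, 1, 1]
print(best_split(X, y))
```

A full tree would apply such a split recursively to the resulting child nodes, and a forest would repeat this over bootstrap samples and feature subsets; how the paper handles those details is not stated in the abstract.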
