Practical First-Order Bayesian Optimization Algorithms


Jun 19, 2023

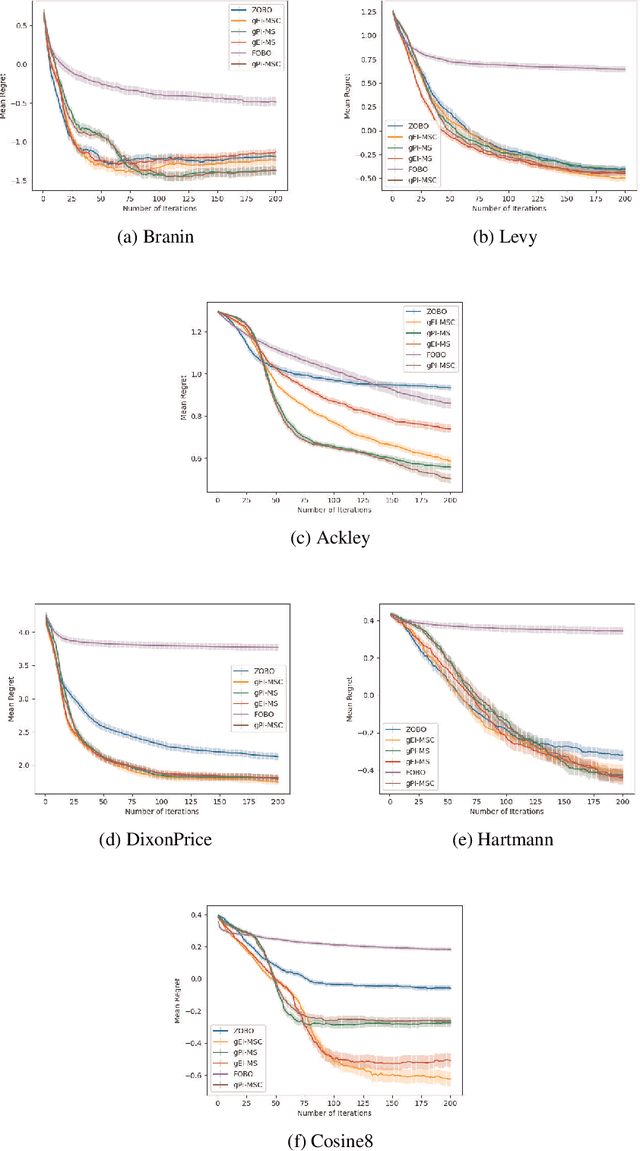

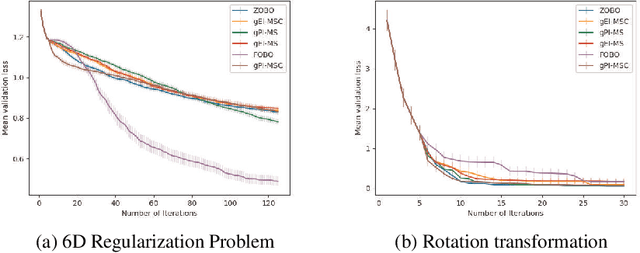

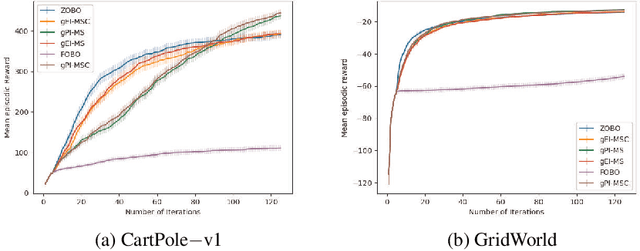

First-Order Bayesian Optimization (FOBO) is a sample-efficient sequential approach for finding the global maximum of an expensive-to-evaluate black-box objective function by querying both the function and its gradient. Such methods model both the function and its gradient with Gaussian processes (GPs) and use them to construct an acquisition function that identifies the next query point. In this paper, we propose a class of practical FOBO algorithms that efficiently use the information from the gradient GP to identify potential query points with zero gradient. We construct a multi-level acquisition function: in the first step, we optimize a lower-level acquisition function with multiple restarts to identify potential query points with zero gradient value. We then use an upper-level acquisition function to rank these points by their function values, aiming to identify the global maximum. Finally, the most promising candidate maximum is chosen as the actual query point. We validate the performance of our proposed algorithms on several test functions and show that they outperform state-of-the-art FOBO algorithms. We also illustrate their application to finding an optimal set of hyperparameters in machine learning and to learning an optimal policy in reinforcement learning tasks.
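The multi-level selection step described above can be illustrated with a small numpy sketch. This is not the authors' implementation. It rests on several assumptions of our own: a joint GP over the function and its gradient with a squared-exponential kernel and fixed hyperparameters (`ell`, `sf2`, `noise`), no hyperparameter fitting, a lower level that runs projected gradient ascent along the posterior gradient mean from random restarts and keeps the endpoints where that mean is nearly zero, and an upper level that ranks the surviving candidates with a UCB score. All names (`joint_kernel`, `GradientGP`, `next_query`, `objective`) and parameter values are hypothetical.

```python
import numpy as np


def joint_kernel(A, B, ell, sf2):
    """Squared-exponential covariance between [f, grad f] at points A and at points B.

    Ordering on each side (assumed convention): all function values first,
    then gradients point by point (point 0 dims 0..d-1, point 1 dims 0..d-1, ...).
    """
    nA, d = A.shape
    nB = B.shape[0]
    diff = A[:, None, :] - B[None, :, :]                        # (nA, nB, d): a - b
    k = sf2 * np.exp(-0.5 * np.sum(diff**2, axis=-1) / ell**2)  # Cov(f(a), f(b))
    # Cov(f(a), df(b)/db_j) = k * (a_j - b_j) / ell^2
    K_fg = (k[:, :, None] * diff / ell**2).reshape(nA, nB * d)
    # Cov(df(a)/da_i, f(b)) = -k * (a_i - b_i) / ell^2
    K_gf = (-k[:, :, None] * diff / ell**2).transpose(0, 2, 1).reshape(nA * d, nB)
    # Cov(df(a)/da_i, df(b)/db_j) = k * (delta_ij / ell^2 - diff_i * diff_j / ell^4)
    outer = diff[:, :, :, None] * diff[:, :, None, :]
    K_gg = k[:, :, None, None] * (np.eye(d) / ell**2 - outer / ell**4)
    K_gg = K_gg.transpose(0, 2, 1, 3).reshape(nA * d, nB * d)
    return np.block([[k, K_fg], [K_gf, K_gg]])


class GradientGP:
    """GP conditioned on function values f (n,) and gradients G (n, d); fixed hyperparameters."""

    def __init__(self, X, f, G, ell=0.5, sf2=1.0, noise=1e-6):
        self.X, self.ell, self.sf2 = X, ell, sf2
        self.mu = f.mean()  # constant prior mean for f; the gradient prior mean stays zero
        y = np.concatenate([f - self.mu, G.reshape(-1)])
        K = joint_kernel(X, X, ell, sf2) + noise * np.eye(len(y))
        self.L = np.linalg.cholesky(K)
        self.alpha = np.linalg.solve(self.L.T, np.linalg.solve(self.L, y))

    def mean(self, x):
        """Posterior means of f(x) and grad f(x) at a single point x."""
        m = joint_kernel(x[None, :], self.X, self.ell, self.sf2) @ self.alpha
        return m[0] + self.mu, m[1:]

    def f_std(self, x):
        """Posterior standard deviation of f(x)."""
        Ks = joint_kernel(x[None, :], self.X, self.ell, self.sf2)
        v = np.linalg.solve(self.L, Ks[0])
        return np.sqrt(max(self.sf2 - v @ v, 0.0))


def next_query(gp, bounds, n_restarts=10, steps=200, lr=0.1, tol=1e-3, beta=2.0, rng=None):
    rng = np.random.default_rng(rng)
    lo, hi = bounds[:, 0], bounds[:, 1]
    ends = []
    # Lower level (assumed form): from random restarts, follow the posterior
    # gradient mean until it (approximately) vanishes, staying inside the bounds.
    for _ in range(n_restarts):
        x = rng.uniform(lo, hi)
        for _ in range(steps):
            x = np.clip(x + lr * gp.mean(x)[1], lo, hi)
        ends.append(x)
    ends = np.array(ends)
    grad_norms = np.array([np.linalg.norm(gp.mean(x)[1]) for x in ends])
    cands = ends[grad_norms < tol]
    if len(cands) == 0:  # no zero-gradient point found: fall back to all endpoints
        cands = ends
    # Upper level (assumed form): rank candidates by a UCB score on f.
    scores = [gp.mean(x)[0] + beta * gp.f_std(x) for x in cands]
    return cands[int(np.argmax(scores))]


def objective(x):  # hypothetical toy objective with its analytic gradient
    return -np.sum((x - 0.3) ** 2), -2.0 * (x - 0.3)


bounds = np.array([[0.0, 1.0], [0.0, 1.0]])
X = np.array([[0.1, 0.1], [0.9, 0.1], [0.1, 0.9], [0.9, 0.9], [0.5, 0.5]])
vals, grads = zip(*(objective(x) for x in X))
f, G = np.array(vals), np.array(grads)
for it in range(5):
    gp = GradientGP(X, f, G)
    x_next = next_query(gp, bounds, rng=it)
    fx, gx = objective(x_next)
    X, f, G = np.vstack([X, x_next]), np.append(f, fx), np.vstack([G, gx])
print("best point:", X[np.argmax(f)], "value:", f.max())
```

Because the posterior mean of the gradient GP is the derivative of the function's posterior mean, ascending along it tends to stop at local maxima of the posterior mean, which makes the endpoints natural zero-gradient candidates; the paper's actual lower- and upper-level acquisition functions may differ from these choices.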
