Pix2Point: Learning Outdoor 3D Using Sparse Point Clouds and Optimal Transport

Paper and Code

Jul 30, 2021

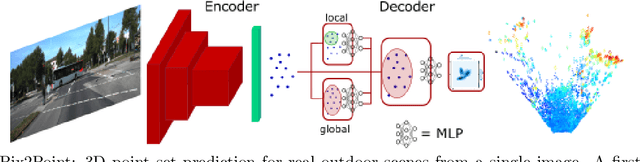

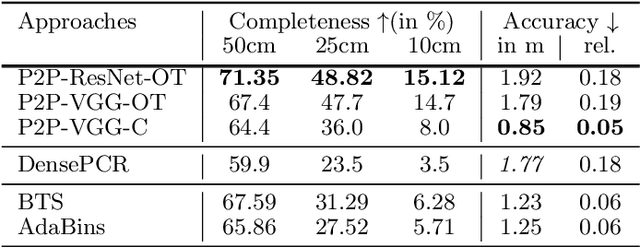

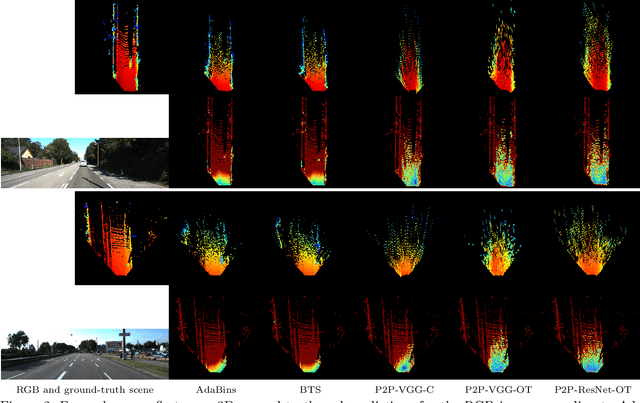

Good quality reconstruction and comprehension of a scene rely on 3D estimation methods. The 3D information was usually obtained from images by stereo-photogrammetry, but deep learning has recently provided us with excellent results for monocular depth estimation. Building up a sufficiently large and rich training dataset to achieve these results requires onerous processing. In this paper, we address the problem of learning outdoor 3D point cloud from monocular data using a sparse ground-truth dataset. We propose Pix2Point, a deep learning-based approach for monocular 3D point cloud prediction, able to deal with complete and challenging outdoor scenes. Our method relies on a 2D-3D hybrid neural network architecture, and a supervised end-to-end minimisation of an optimal transport divergence between point clouds. We show that, when trained on sparse point clouds, our simple promising approach achieves a better coverage of 3D outdoor scenes than efficient monocular depth methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge