PeFAD: A Parameter-Efficient Federated Framework for Time Series Anomaly Detection


Jun 04, 2024

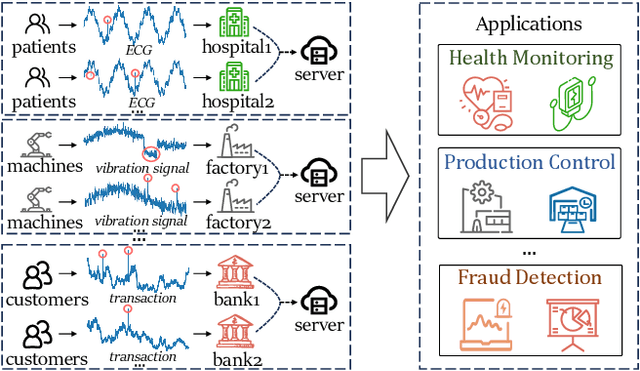

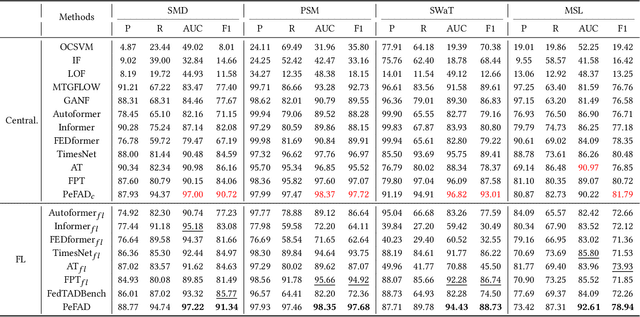

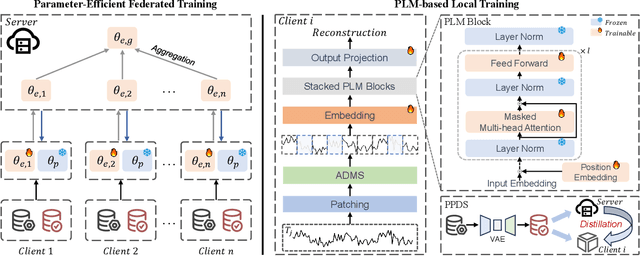

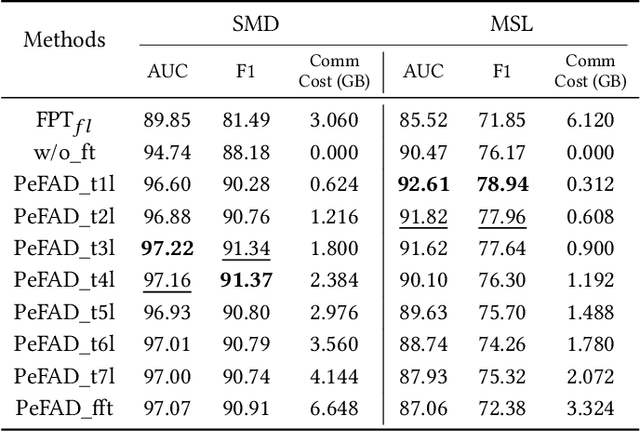

With the proliferation of mobile sensing techniques, huge amounts of time series data are generated and accumulated in various domains, fueling many real-world applications. In this setting, time series anomaly detection is of great practical importance: it aims to identify samples that deviate from the normal sample distribution in a time series. Existing approaches generally assume that all time series data are available at a central location. However, the deployment of various edge devices means that time series are increasingly collected in a decentralized manner. To bridge the gap between decentralized time series data and centralized anomaly detection algorithms, and in light of increasing privacy concerns, we propose a Parameter-efficient Federated Anomaly Detection framework named PeFAD. PeFAD is the first to employ a pre-trained language model (PLM) as the body of each client's local model, allowing it to benefit from the PLM's cross-modality knowledge transfer capability. To reduce communication overhead and local model adaptation cost, we propose a parameter-efficient federated training module in which clients only need to fine-tune a small set of parameters and transmit them to the server for updating. PeFAD also uses a novel anomaly-driven mask selection strategy to mitigate the impact of anomalies that are neglected during training. In addition, we propose a knowledge distillation operation on a synthetic, privacy-preserving dataset shared by all clients to address data heterogeneity across clients. Extensive evaluations on four real datasets show that PeFAD outperforms existing state-of-the-art baselines by up to 28.74%.
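To make the communication saving of the parameter-efficient federated training module concrete, here is a minimal sketch under stated assumptions. It is not the authors' implementation. The frozen PLM body never leaves the client, and only a small dictionary of trainable parameters is updated locally and uploaded. The parameter names (`adapter.w`, `layernorm.scale`), the single plain SGD step, and the FedAvg-style sample-weighted averaging on the server are all hypothetical choices made for illustration; the abstract does not specify the aggregation rule.

```python
from typing import Dict, List

# Hypothetical container for the small trainable subset; the frozen PLM body
# is assumed to stay on each client and never appears in these dicts.
Params = Dict[str, List[float]]


def client_update(trainable: Params, grads: Params, lr: float = 0.1) -> Params:
    """One illustrative local SGD step applied only to the trainable subset."""
    return {
        name: [w - lr * g for w, g in zip(trainable[name], grads[name])]
        for name in trainable
    }


def server_aggregate(client_params: List[Params], num_samples: List[int]) -> Params:
    """FedAvg-style average weighted by client sample counts (an assumed rule)."""
    total = sum(num_samples)
    return {
        name: [
            sum(p[name][i] * n for p, n in zip(client_params, num_samples)) / total
            for i in range(len(client_params[0][name]))
        ]
        for name in client_params[0]
    }


def count_scalars(params: Params) -> int:
    """Number of values a client uploads per round (the communication cost)."""
    return sum(len(values) for values in params.values())


# Usage: two clients start from the same global trainable parameters.
global_params: Params = {"adapter.w": [0.0, 0.0], "layernorm.scale": [1.0]}
client_a = client_update(global_params, {"adapter.w": [1.0, -1.0], "layernorm.scale": [0.5]})
client_b = client_update(global_params, {"adapter.w": [-1.0, 3.0], "layernorm.scale": [-0.5]})
new_global = server_aggregate([client_a, client_b], num_samples=[300, 100])
print(new_global)
print(count_scalars(client_a))
```

Each client uploads only as many values as `count_scalars` reports, independent of how large the frozen PLM body is. This is the intuition behind fine-tuning and transmitting small-scale parameters rather than the full model.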
