Pedestrian Behavior Prediction via Multitask Learning and Categorical Interaction Modeling

Dec 06, 2020

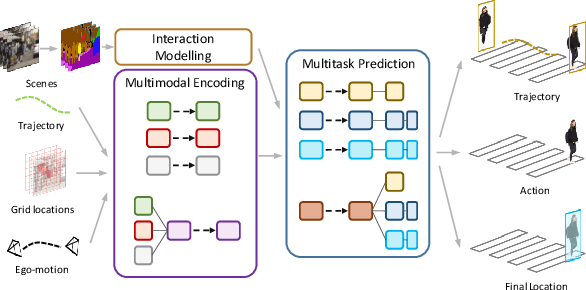

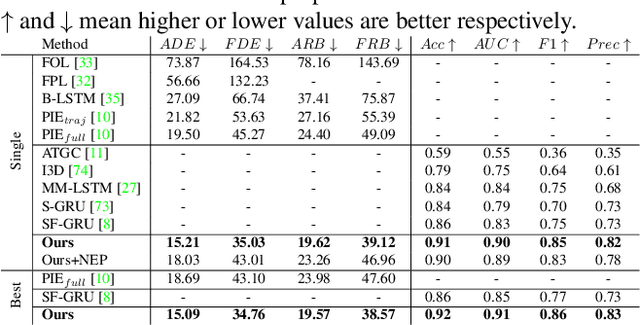

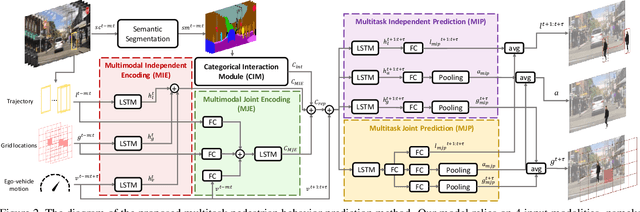

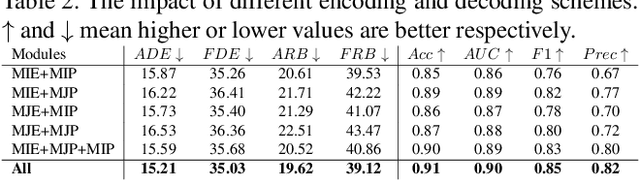

Pedestrian behavior prediction is one of the major challenges for intelligent driving systems. Pedestrians often exhibit complex behaviors influenced by various contextual elements. To address this problem, we propose a multitask learning framework that simultaneously predicts the trajectories and actions of pedestrians from multimodal data. Our method benefits from 1) a hybrid mechanism that encodes the input modalities both independently, allowing each to develop its own representation, and jointly, producing a representation for all modalities using shared parameters; 2) a novel interaction modeling technique that relies on categorical semantic parsing of the scenes to capture interactions between target pedestrians and their surroundings; and 3) a dual prediction mechanism that uses both independent and shared decoding of multimodal representations. Using the public pedestrian behavior benchmark datasets for driving, PIE and JAAD, we highlight the benefits of multitask learning for behavior prediction and show that our model achieves state-of-the-art performance, improving trajectory and action prediction by up to 22% and 6%, respectively. We further investigate the contributions of the proposed processing and interaction modeling techniques via extensive ablation studies.
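As a rough illustration of what joint training on trajectory and action prediction can look like, the sketch below combines a regression loss and a classification loss into a single multitask objective. The abstract does not state the loss functions or weights the authors use, so everything here is an assumption: mean squared error for trajectories, binary cross-entropy for a single action (for example, crossing versus not crossing), and the hypothetical names `trajectory_loss`, `action_loss`, `multitask_loss`, `w_traj`, and `w_action`.

```python
import math


def trajectory_loss(pred, target):
    """Mean squared error over all coordinates of all predicted time steps.

    Assumption: each trajectory is a list of time steps, and each time step
    is a list of coordinates (e.g. bounding-box values).
    """
    total, count = 0.0, 0
    for pred_step, target_step in zip(pred, target):
        for p, t in zip(pred_step, target_step):
            total += (p - t) ** 2
            count += 1
    return total / count


def action_loss(prob, label, eps=1e-7):
    """Binary cross-entropy for one predicted action probability.

    Assumption: the action is binary (label is 0 or 1).
    """
    prob = min(max(prob, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(label * math.log(prob) + (1 - label) * math.log(1.0 - prob))


def multitask_loss(pred_traj, true_traj, action_prob, action_label,
                   w_traj=1.0, w_action=1.0):
    """Weighted sum of the two task losses (weights are hypothetical)."""
    return (w_traj * trajectory_loss(pred_traj, true_traj)
            + w_action * action_loss(action_prob, action_label))
```

Because both terms are computed from the same forward pass, gradients from each task would flow into any shared parameters, which is the general idea behind multitask learning; how the paper balances the two tasks is not specified in the abstract.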
