Parameters Sharing Exploration and Hetero-Center based Triplet Loss for Visible-Thermal Person Re-Identification

Paper and Code

Aug 14, 2020

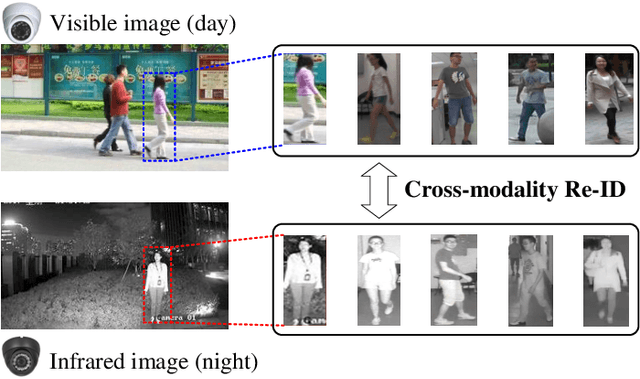

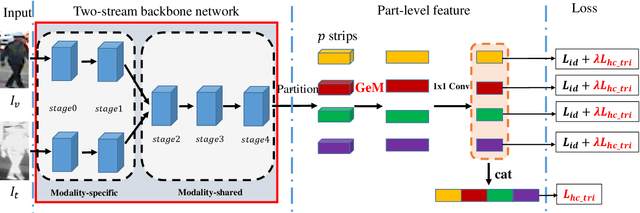

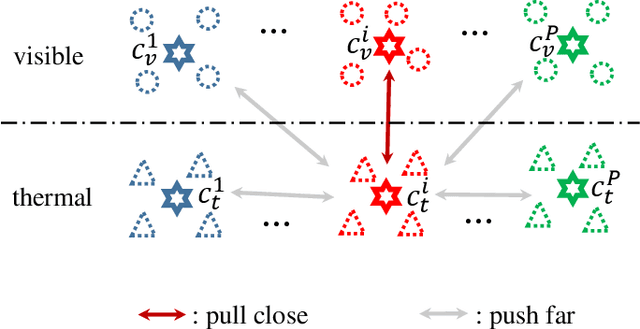

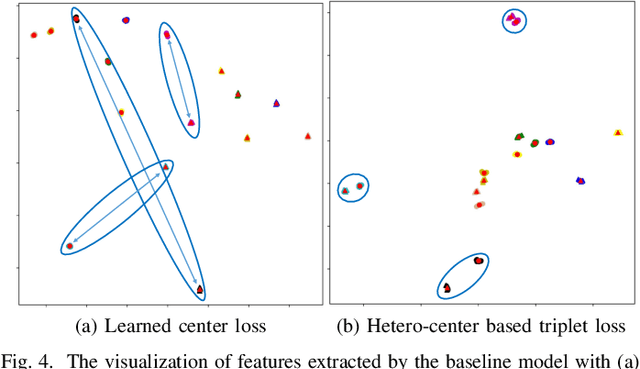

This paper focuses on the visible-thermal cross-modality person re-identification (VT Re-ID) task, whose goal is to match person images between the daytime visible modality and the nighttime thermal modality. A two-stream network is usually adopted to address the cross-modality discrepancy, the most challenging problem in VT Re-ID, by learning multi-modality person features. In this paper, we explore how many parameters of the two-stream network should be shared, a question that has not been well investigated in the existing literature. By splitting the ResNet50 model into a modality-specific feature extraction network and a modality-shared feature embedding network, we experimentally demonstrate the effect of parameter sharing in the two-stream network for VT Re-ID. Moreover, in the framework of part-level person feature learning, we propose the hetero-center based triplet loss, which relaxes the strict constraint of the traditional triplet loss by replacing the comparison of an anchor with all other samples by the comparison of an anchor center with all other centers. With these extremely simple means, the proposed method significantly improves VT Re-ID performance. Experimental results on two datasets show that the proposed method outperforms the state-of-the-art methods by large margins, especially on the RegDB dataset, where it achieves rank1/mAP/mINP of 91.05%/83.28%/68.84%. With its simple but effective strategy, it can serve as a new baseline for VT Re-ID.
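The center-to-center comparison described above can be illustrated with a minimal sketch. It is one possible reading of the abstract, not the paper's implementation: the function names, the Euclidean distance, the default margin of 0.3, and the choice of the nearest other-identity center as the negative are all assumptions made for illustration. Each identity gets one feature center per modality; the anchor center is pulled toward the same identity's center in the other modality and pushed away from the centers of other identities.

```python
import math


def center(features):
    """Mean of a list of equal-length feature vectors."""
    n = len(features)
    return [sum(column) / n for column in zip(*features)]


def euclidean(a, b):
    """Euclidean distance between two vectors (assumed metric)."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def hetero_center_triplet_loss(visible, thermal, margin=0.3):
    """Hypothetical sketch of a center-based cross-modality triplet loss.

    visible, thermal: dicts mapping identity -> list of feature vectors.
    Both dicts must share the same identities, and at least two
    identities are needed so that every anchor has a negative center.
    """
    ids = sorted(visible)
    centers = {}
    for pid in ids:
        centers[(pid, "v")] = center(visible[pid])
        centers[(pid, "t")] = center(thermal[pid])

    loss = 0.0
    for pid in ids:
        # Use each modality's center as the anchor in turn.
        for anchor_mod, positive_mod in (("v", "t"), ("t", "v")):
            anchor = centers[(pid, anchor_mod)]
            # Positive: same identity, other modality.
            d_pos = euclidean(anchor, centers[(pid, positive_mod)])
            # Negative: nearest center of any other identity, either
            # modality (assumed hardest-negative choice).
            d_neg = min(
                euclidean(anchor, c)
                for (other, _), c in centers.items()
                if other != pid
            )
            loss += max(0.0, margin + d_pos - d_neg)
    return loss
```

Because only one center per identity and modality enters the loss, the number of compared pairs depends on the number of identities in the batch rather than the number of samples, which is the relaxation the abstract refers to.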
