Outlier-Robust Clustering of Non-Spherical Mixtures


May 13, 2020

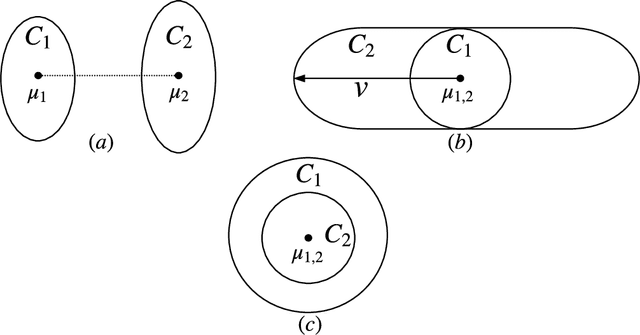

We give the first outlier-robust efficient algorithm for clustering a mixture of $k$ statistically separated $d$-dimensional Gaussians ($k$-GMMs). Concretely, our algorithm takes as input an $\epsilon$-corrupted sample from a $k$-GMM and, with high probability, in $d^{\text{poly}(k/\eta)}$ time outputs an approximate clustering that misclassifies at most a $k^{O(k)}(\epsilon+\eta)$ fraction of the points whenever every pair of mixture components is separated by at least $1-\exp(-\text{poly}(k/\eta)^k)$ in total variation (TV) distance. Such a result was not previously known even for $k=2$. TV separation is the statistically weakest possible notion of separation and captures important special cases such as mixed linear regression and subspace clustering. Our main conceptual contribution is to distill two simple analytic properties - (certifiable) hypercontractivity and anti-concentration - that are necessary and sufficient for mixture models to be (efficiently) clusterable. As a consequence, our results extend to clustering mixtures of arbitrary affine transforms of the uniform distribution on the $d$-dimensional unit sphere. Even the information-theoretic clusterability of separated distributions satisfying these two analytic assumptions was not known prior to our work and is likely to be of independent interest. Our algorithms build on the recent sequence of works relying on certifiable anti-concentration, first introduced in [KKK'19,RY'20]. Our techniques expand the sum-of-squares toolkit to show robust certifiability of TV-separated Gaussian clusters in data. This involves giving low-degree sum-of-squares proofs of statements that relate parameter (i.e., mean and covariance) distance to total variation distance by relying only on hypercontractivity and anti-concentration.
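To make the input model and the error measure concrete, the following is a minimal Python sketch and not the paper's sum-of-squares algorithm. It draws a sample from a toy 2-D mixture whose two components share a mean and differ only in covariance (so they are separated in TV distance but not in their means), overwrites an $\epsilon$ fraction of the points, and scores a clustering by the fraction of misclassified inliers, minimized over relabelings of the clusters. The function names, the specific covariances, the uniform outliers, and the choice to score inliers only are all assumptions made for illustration.

```python
import itertools
import random


def sample_corrupted_gmm(n, eps, seed=0):
    """Draw n points from a toy equal-weight mixture of two 2-D Gaussians,
    then overwrite an eps fraction with arbitrary points (label -1)."""
    rng = random.Random(seed)
    points, labels = [], []
    for _ in range(n):
        c = rng.randrange(2)
        # Both components have mean zero and differ only in covariance:
        # component 0 is thin along the y-axis, component 1 along the x-axis.
        sx, sy = (1.0, 0.01) if c == 0 else (0.01, 1.0)
        points.append((rng.gauss(0.0, sx), rng.gauss(0.0, sy)))
        labels.append(c)
    # Stand-in adversary (an assumption): replace the first int(eps * n)
    # points with uniform noise. A real adversary may choose any points.
    for i in range(int(eps * n)):
        points[i] = (rng.uniform(-5.0, 5.0), rng.uniform(-5.0, 5.0))
        labels[i] = -1
    return points, labels


def misclassified_fraction(true_labels, predicted, k):
    """Fraction of inliers assigned to the wrong cluster, minimized over
    all relabelings (permutations) of the k predicted clusters."""
    inliers = [(t, p) for t, p in zip(true_labels, predicted) if t != -1]
    best = min(
        sum(perm[p] != t for t, p in inliers)
        for perm in itertools.permutations(range(k))
    )
    return best / len(inliers)
```

For example, calling `sample_corrupted_gmm(200, 0.05)` and labeling each point by whether `abs(x) > abs(y)` gives a clustering that `misclassified_fraction(labels, predicted, 2)` can score. The brute-force minimum over permutations is only practical for small `k`.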
