ORKG-Leaderboards: A Systematic Workflow for Mining Leaderboards as a Knowledge Graph

Paper and Code

May 10, 2023

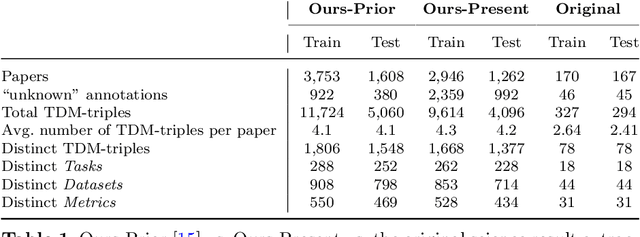

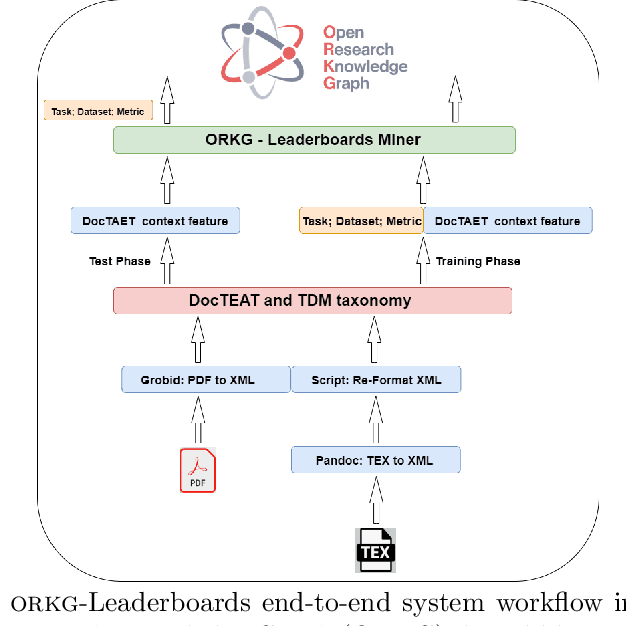

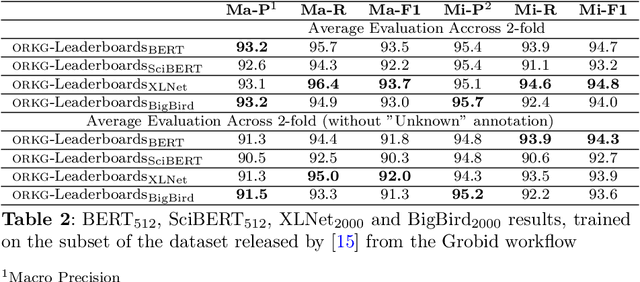

The purpose of this work is to describe the Orkg-Leaderboard software designed to extract leaderboards defined as Task-Dataset-Metric tuples automatically from large collections of empirical research papers in Artificial Intelligence (AI). The software can support both the main workflows of scholarly publishing, viz. as LaTeX files or as PDF files. Furthermore, the system is integrated with the Open Research Knowledge Graph (ORKG) platform, which fosters the machine-actionable publishing of scholarly findings. Thus the system output, when integrated within the ORKG's supported Semantic Web infrastructure of representing machine-actionable 'resources' on the Web, enables: 1) broadly, the integration of empirical results of researchers across the world, thus enabling transparency in empirical research with the potential to also being complete contingent on the underlying data source(s) of publications; and 2) specifically, enables researchers to track the progress in AI with an overview of the state-of-the-art (SOTA) across the most common AI tasks and their corresponding datasets via dynamic ORKG frontend views leveraging tables and visualization charts over the machine-actionable data. Our best model achieves performances above 90% F1 on the \textit{leaderboard} extraction task, thus proving Orkg-Leaderboards a practically viable tool for real-world usage. Going forward, in a sense, Orkg-Leaderboards transforms the leaderboard extraction task to an automated digitalization task, which has been, for a long time in the community, a crowdsourced endeavor.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge