Online Isotonic Regression


Oct 07, 2016

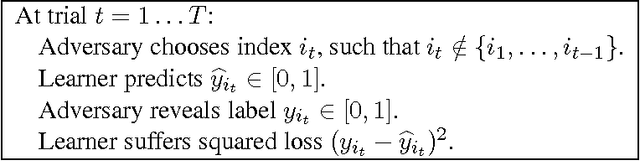

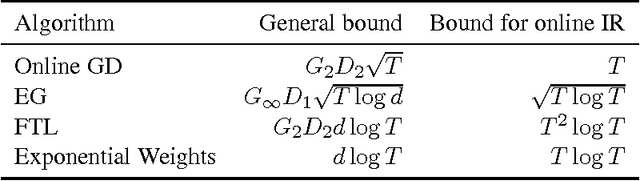

We consider the online version of the isotonic regression problem. Given a set of linearly ordered points (e.g., on the real line), the learner must predict labels sequentially at adversarially chosen positions and is evaluated by her total squared loss compared against the best isotonic (non-decreasing) function in hindsight. We survey several standard online learning algorithms and show that none of them achieves the optimal regret exponent; in fact, most of them (including Online Gradient Descent, Follow the Leader and Exponential Weights) incur linear regret. We then prove that the Exponential Weights algorithm played over a covering net of isotonic functions has regret bounded by $O\big(T^{1/3} \log^{2/3}(T)\big)$ and present a matching $\Omega(T^{1/3})$ lower bound on regret. We provide a computationally efficient version of this algorithm. We also analyze the noise-free case, in which the revealed labels are isotonic, and show that the bound can be improved to $O(\log T)$ or even to $O(1)$ (when the labels are revealed in isotonic order). Finally, we extend the analysis beyond squared loss and give bounds for entropic loss and absolute loss.
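To make the regret notion above concrete, here is a minimal Python sketch. It is not the paper's algorithm. It only computes the comparator (the best isotonic function in hindsight, found here with the standard Pool Adjacent Violators procedure) and the regret of an arbitrary sequence of predictions under squared loss. The function names `isotonic_fit` and `regret` are hypothetical, and the sketch assumes labels and predictions are indexed by position on the line, not by the order in which the adversary reveals them.

```python
from typing import List, Sequence


def isotonic_fit(y: Sequence[float]) -> List[float]:
    """Least-squares non-decreasing fit to y (Pool Adjacent Violators)."""
    blocks: List[List[float]] = []  # each block stores [sum of labels, count]
    for v in y:
        blocks.append([float(v), 1])
        # Merge backwards while the previous block's mean exceeds the last one's.
        while (len(blocks) > 1
               and blocks[-2][0] / blocks[-2][1] > blocks[-1][0] / blocks[-1][1]):
            s, c = blocks.pop()
            blocks[-1][0] += s
            blocks[-1][1] += c
    fit: List[float] = []
    for s, c in blocks:
        fit.extend([s / c] * int(c))  # every point in a block gets the block mean
    return fit


def regret(predictions: Sequence[float], labels: Sequence[float]) -> float:
    """Learner's total squared loss minus that of the best isotonic function."""
    best = isotonic_fit(labels)
    learner_loss = sum((p - y) ** 2 for p, y in zip(predictions, labels))
    best_loss = sum((f - y) ** 2 for f, y in zip(best, labels))
    return learner_loss - best_loss


if __name__ == "__main__":
    labels = [0.0, 1.0, 0.0, 1.0]          # labels at positions 1..4
    predictions = [0.5, 0.5, 0.5, 0.5]     # a learner that always predicts 0.5
    print(isotonic_fit(labels))
    print(regret(predictions, labels))
```

Because the total loss is a sum over positions, the revelation order does not enter this computation; it matters only for which predictions a learner is able to make at each round.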
