On the Study of Sample Complexity for Polynomial Neural Networks

Paper and Code

Jul 18, 2022

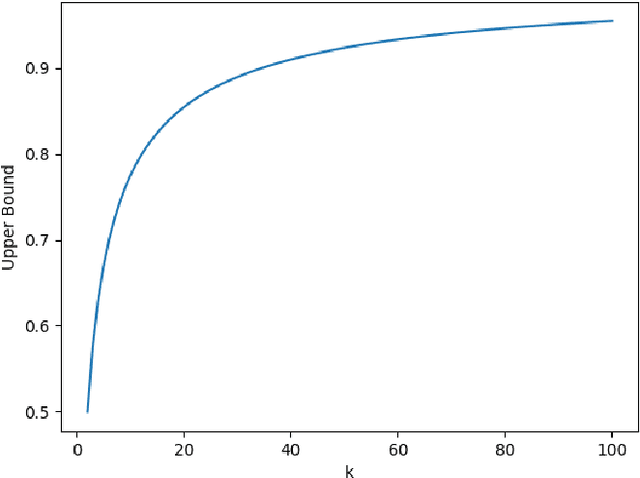

As a general type of machine learning approach, artificial neural networks have established state-of-art benchmarks in many pattern recognition and data analysis tasks. Among various kinds of neural networks architectures, polynomial neural networks (PNNs) have been recently shown to be analyzable by spectrum analysis via neural tangent kernel, and particularly effective at image generation and face recognition. However, acquiring theoretical insight into the computation and sample complexity of PNNs remains an open problem. In this paper, we extend the analysis in previous literature to PNNs and obtain novel results on sample complexity of PNNs, which provides some insights in explaining the generalization ability of PNNs.

* Project paper

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge