On the Structural Sensitivity of Deep Convolutional Networks to the Directions of Fourier Basis Functions


Sep 11, 2018

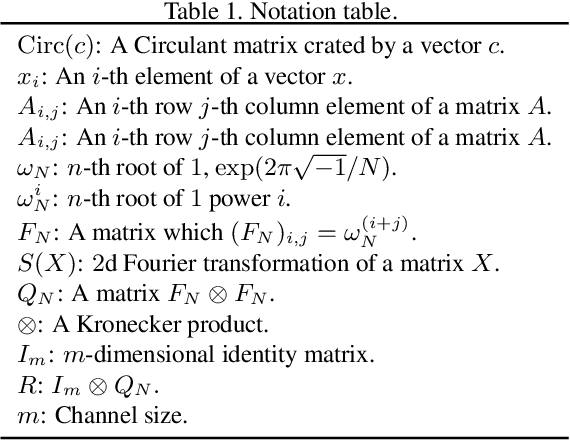

Data-agnostic, quasi-imperceptible perturbations of inputs can severely degrade the recognition accuracy of deep convolutional networks. This indicates a structural instability in their predictions and poses a potential security threat. However, the directions shared by such harmful perturbations, if any exist, have not been characterized, which makes it difficult to address both the security threat and the performance degradation. Our primary finding is that convolutional networks are sensitive to the directions of Fourier basis functions. We derived this property by specializing a hypothesis about the cause of the sensitivity, known as the linearity of neural networks, to convolutional networks, and validated it empirically. As a by-product of the analysis, we propose a fast algorithm for creating shift-invariant universal adversarial perturbations that can be used in black-box settings.
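To make the idea of a perturbation along a Fourier basis direction concrete, the following is a minimal NumPy sketch. It is not the algorithm proposed in the paper. The function name `fourier_basis_perturbation`, the frequency indices `u` and `v`, and the default budget `eps = 8/255` are all assumptions chosen for illustration. The sketch builds a single-frequency pattern, scales it to a fixed L-infinity norm, and checks that a circular shift leaves its magnitude spectrum unchanged, which is the sense in which such a pattern is insensitive to shifts.

```python
import numpy as np


def fourier_basis_perturbation(height, width, u, v, eps=8.0 / 255.0):
    """Return a real single-frequency pattern with L-infinity norm eps.

    Hypothetical helper for illustration only; u and v are the vertical
    and horizontal frequency indices, eps is an assumed budget.
    """
    # Place a single unit coefficient at frequency (u, v).
    spectrum = np.zeros((height, width), dtype=complex)
    spectrum[u % height, v % width] = 1.0
    # The real part of the inverse FFT is a cosine wave at that frequency.
    wave = np.real(np.fft.ifft2(spectrum))
    # Rescale so the largest absolute value equals eps.
    return eps * wave / np.max(np.abs(wave))


# Build one perturbation and compare its magnitude spectrum before and
# after a circular shift along the vertical axis.
pert = fourier_basis_perturbation(32, 32, u=4, v=0)
shifted = np.roll(pert, shift=3, axis=0)
same_magnitude = np.allclose(
    np.abs(np.fft.fft2(pert)), np.abs(np.fft.fft2(shifted))
)
```

A circular shift only changes the phase of each Fourier coefficient, so `same_magnitude` holds for any shift amount. To apply such a pattern to an image with values in [0, 1], one would add it to every channel and clip the result back to the valid range.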
