On the Memorization Properties of Contrastive Learning

Paper and Code

Jul 21, 2021

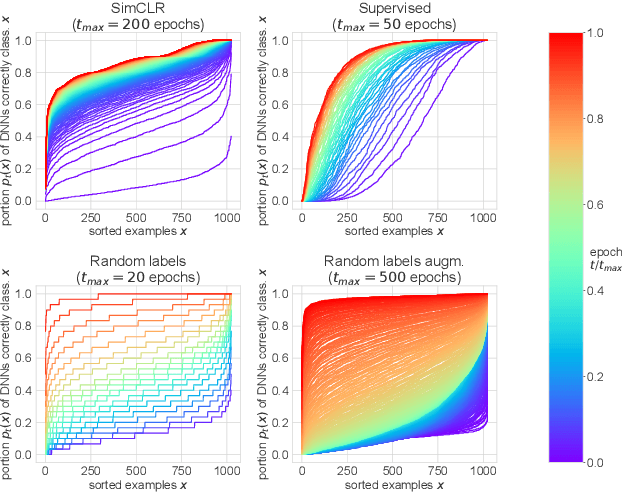

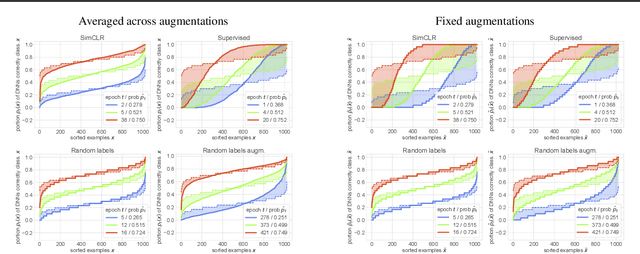

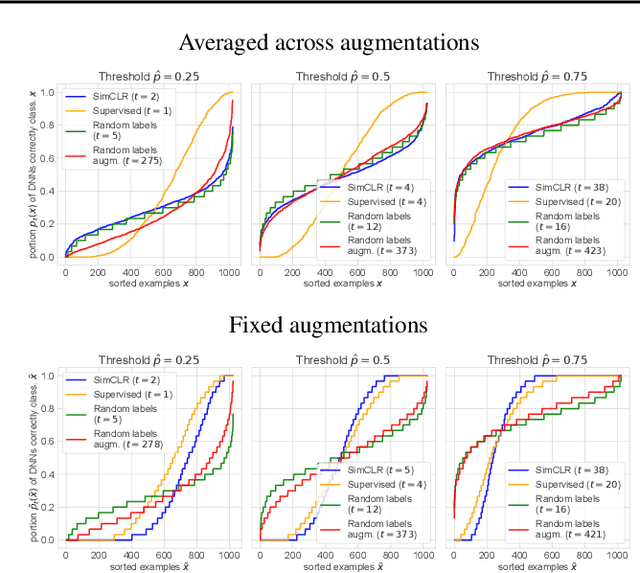

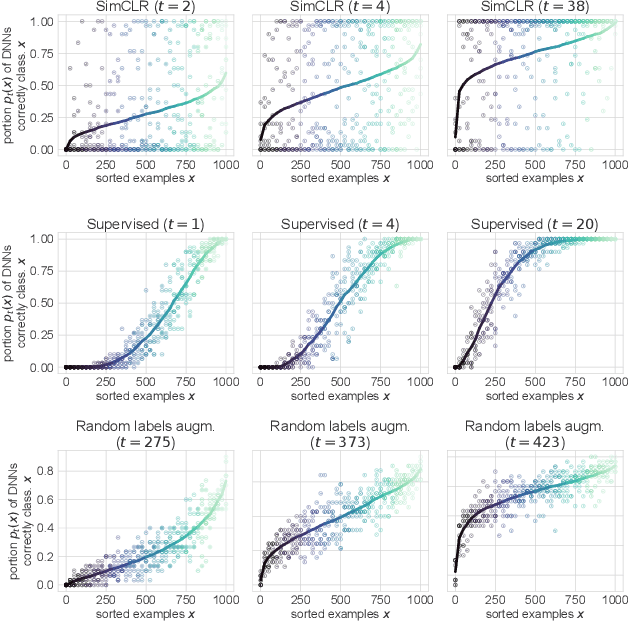

Memorization studies of deep neural networks (DNNs) help to understand what patterns and how do DNNs learn, and motivate improvements to DNN training approaches. In this work, we investigate the memorization properties of SimCLR, a widely used contrastive self-supervised learning approach, and compare them to the memorization of supervised learning and random labels training. We find that both training objects and augmentations may have different complexity in the sense of how SimCLR learns them. Moreover, we show that SimCLR is similar to random labels training in terms of the distribution of training objects complexity.

* Published in Workshop on Overparameterization: Pitfalls &

Opportunities at ICML 2021

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge