On the Importance of Regularisation & Auxiliary Information in OOD Detection

Paper and Code

Jul 15, 2021

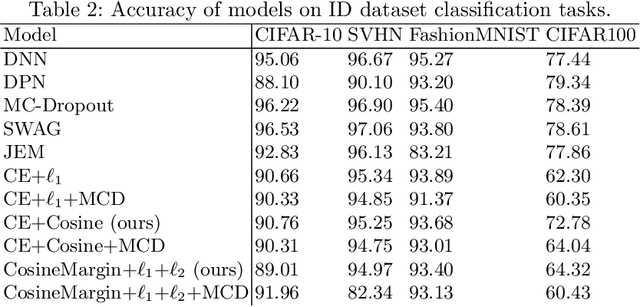

Neural networks are often utilised in critical domain applications (e.g.~self-driving cars, financial markets, and aerospace engineering), even though they exhibit overconfident predictions for ambiguous inputs. This deficiency demonstrates a fundamental flaw indicating that neural networks often overfit on spurious correlations. To address this problem in this work we present two novel objectives that improve the ability of a network to detect out-of-distribution samples and therefore avoid overconfident predictions for ambiguous inputs. We empirically demonstrate that our methods outperform the baseline and perform better than the majority of existing approaches, while performing competitively those that they don't outperform. Additionally, we empirically demonstrate the robustness of our approach against common corruptions and demonstrate the importance of regularisation and auxiliary information in out-of-distribution detection.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge