On the Convergence of Policy in Unregularized Policy Mirror Descent

Paper and Code

May 19, 2022

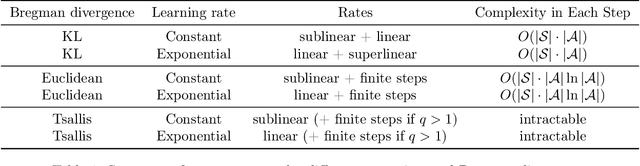

In this short note, we give the convergence analysis of the policy in the recent famous policy mirror descent (PMD). We mainly consider the unregularized setting following [11] with generalized Bregman divergence. The difference is that we directly give the convergence rates of policy under generalized Bregman divergence. Our results are inspired by the convergence of value function in previous works and are an extension study of policy mirror descent. Though some results have already appeared in previous work, we further discover a large body of Bregman divergences could give finite-step convergence to an optimal policy, such as the classical Euclidean distance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge