On learning parametric distributions from quantized samples


May 25, 2021

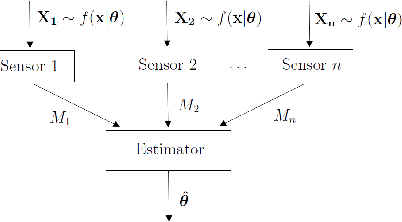

We consider the problem of learning parametric distributions from their quantized samples in a network. Specifically, $n$ agents or sensors observe independent samples of an unknown parametric distribution, and each uses $k$ bits to describe its observed sample to a central processor whose goal is to estimate the unknown distribution. First, we establish a generalization of the well-known van Trees inequality to general $L_p$-norms, with $p > 1$, in terms of generalized Fisher information. Then, we develop minimax lower bounds on the estimation error for two losses: general $L_p$-norms and the related Wasserstein loss from optimal transport.
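To make the setup concrete, the following is a minimal simulation sketch rather than the scheme analyzed in the paper. It assumes a Gaussian location family $N(\theta, 1)$, a uniform $k$-bit scalar quantizer on a fixed range, and a central processor that averages bin midpoints. The function names, the range $[-4, 4]$, and the decoder are all illustrative assumptions.

```python
import numpy as np

def quantize(x, k, lo=-4.0, hi=4.0):
    """Map each sample to one of 2**k uniform bins on [lo, hi] (assumed range)."""
    levels = 2 ** k
    clipped = np.clip(x, lo, hi)
    idx = np.floor((clipped - lo) / (hi - lo) * levels).astype(int)
    return np.minimum(idx, levels - 1)  # a sample equal to hi goes in the last bin

def dequantize(idx, k, lo=-4.0, hi=4.0):
    """Reconstruct each k-bit message as the midpoint of its bin."""
    width = (hi - lo) / 2 ** k
    return lo + (idx + 0.5) * width

def estimate_mean(n, k, theta, rng):
    """n agents each observe one N(theta, 1) sample and send k bits;
    the central processor averages the reconstructed midpoints."""
    samples = rng.normal(loc=theta, scale=1.0, size=n)
    messages = quantize(samples, k)  # one k-bit index per agent
    return dequantize(messages, k).mean()

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    theta = 0.7  # hypothetical true parameter
    for k in (1, 2, 4, 8):
        est = estimate_mean(10_000, k, theta, rng)
        print(f"k={k}: |estimate - theta| = {abs(est - theta):.4f}")
```

With this naive midpoint decoder the estimate is visibly biased at small $k$, which illustrates why the choice of quantizer and estimator matters and why lower bounds of the kind described above are of interest.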

* Accepted for publication at the IEEE International Symposium on Information Theory (ISIT) 2021
