On Adaptivity in Information-constrained Online Learning

Oct 19, 2019

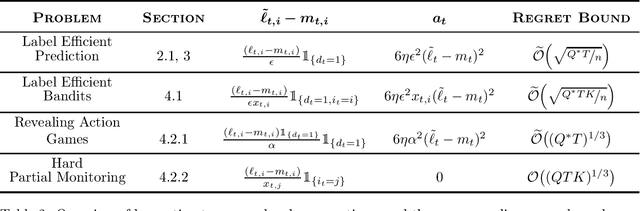

We study how to adapt to smoothly-varying ("easy") environments in well-known online learning problems where acquiring information is expensive. For the problem of label efficient prediction, which is a budgeted version of prediction with expert advice, we present an online algorithm whose regret depends optimally on the number of labels allowed and $Q^*$ (the quadratic variation of the losses of the best action in hindsight), along with a parameter-free counterpart whose regret depends optimally on $Q$ (the quadratic variation of the losses of all the actions). These quantities can be significantly smaller than $T$ (the total time horizon), yielding an improvement over existing, variation-independent results for the problem. We then extend our analysis to handle label efficient prediction with bandit feedback, i.e., label efficient bandits. Our work builds upon the framework of optimistic online mirror descent, and leverages second-order corrections along with a carefully designed hybrid regularizer that encodes the constrained information structure of the problem. We then consider revealing action partial monitoring games, a version of label efficient prediction with additive information costs; in general, these games are known to lie in the "hard" class of games, which has minimax regret of order $T^{\frac{2}{3}}$. We provide a strategy with an $\mathcal{O}((Q^*T)^{\frac{1}{3}})$ bound for revealing action games, along with one with an $\mathcal{O}((QT)^{\frac{1}{3}})$ bound for the full class of hard partial monitoring games, both being strict improvements over current bounds.
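To give a feel for why $Q^*$ and $Q$ can be much smaller than $T$, the following is a minimal sketch on a toy loss sequence. It assumes the common definition of quadratic variation as the sum of squared deviations of the losses from their mean over the horizon, with $Q$ taken as the sum of the per-action variations; the abstract does not spell out the definition, so this is an assumption. The function names and the toy environment are hypothetical and are not taken from the paper.

```python
def quadratic_variation(losses):
    """Sum over rounds of the squared deviation from the sequence mean.

    Assumed definition; the abstract does not state the exact form.
    """
    mean = sum(losses) / len(losses)
    return sum((x - mean) ** 2 for x in losses)


def variation_summary(loss_matrix):
    """loss_matrix[t][i] is the loss of action i at round t, in [0, 1].

    Returns (T, best_action, Q_star, Q), where Q_star is the variation of
    the best action in hindsight and Q sums the variation of all actions.
    """
    T = len(loss_matrix)
    K = len(loss_matrix[0])
    per_action = [[row[i] for row in loss_matrix] for i in range(K)]
    totals = [sum(col) for col in per_action]
    best = min(range(K), key=lambda i: totals[i])
    q_star = quadratic_variation(per_action[best])
    q_all = sum(quadratic_variation(col) for col in per_action)
    return T, best, q_star, q_all


# Toy environment (hypothetical): action 0 has constant loss 0.2,
# action 1 alternates between loss 0 and loss 1.
T = 1000
loss_matrix = [[0.2, float(t % 2)] for t in range(T)]
horizon, best, q_star, q_all = variation_summary(loss_matrix)

# Compare the variation-dependent rate with the variation-independent one
# for hard partial monitoring games (constants and log factors ignored).
rate_adaptive = (q_all * horizon) ** (1 / 3)
rate_minimax = horizon ** (2 / 3)
print(best, q_star, q_all, rate_adaptive, rate_minimax)
```

In this toy case the best action never changes its loss, so $Q^*$ is essentially zero, while $Q$ is a quarter of $T$; both rates above drop constants and logarithmic factors, so the comparison is only indicative.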
