Nutrition Estimation for Dietary Management: A Transformer Approach with Depth Sensing

Paper and Code

Jun 04, 2024

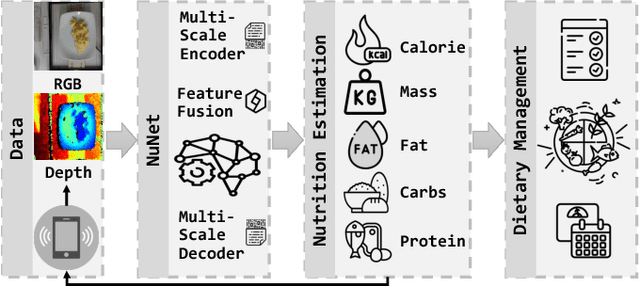

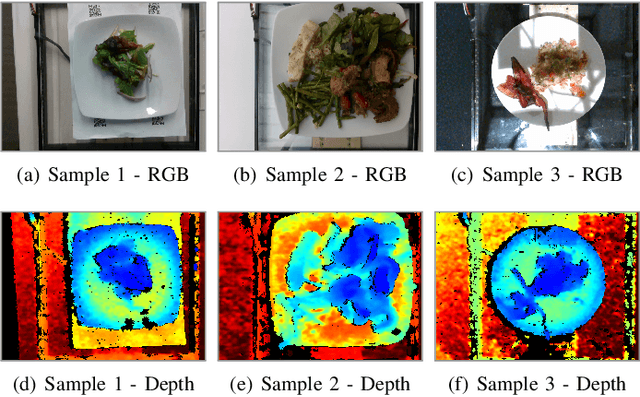

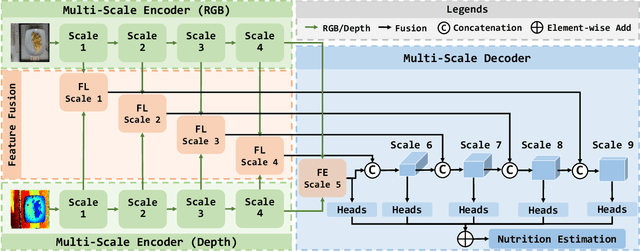

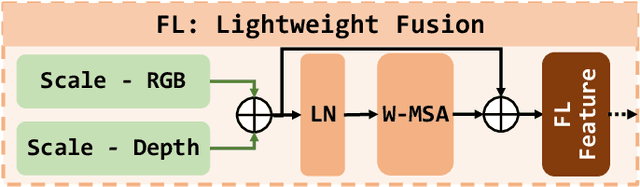

Nutrition estimation is crucial for effective dietary management and overall health and well-being. Existing methods often struggle with sub-optimal accuracy and can be time-consuming. In this paper, we propose NuNet, a transformer-based network designed for nutrition estimation that utilizes both RGB and depth information from food images. We have designed and implemented a multi-scale encoder and decoder, along with two types of feature fusion modules, specialized for estimating five nutritional factors. These modules effectively balance the efficiency and effectiveness of feature extraction with flexible usage of our customized attention mechanisms and fusion strategies. Our experimental study shows that NuNet outperforms its variants and existing solutions significantly for nutrition estimation. It achieves an error rate of 15.65%, the lowest known to us, largely due to our multi-scale architecture and fusion modules. This research holds practical values for dietary management with huge potential for transnational research and deployment and could inspire other applications involving multiple data types with varying degrees of importance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge