Neural Ordinary Differential Equations on Manifolds

Paper and Code

Jun 11, 2020

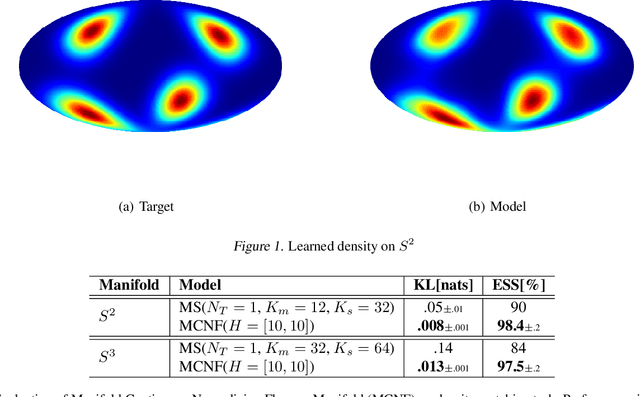

Normalizing flows are a powerful technique for obtaining reparameterizable samples from complex multimodal distributions. Unfortunately current approaches fall short when the underlying space has a non trivial topology, and are only available for the most basic geometries. Recently normalizing flows in Euclidean space based on Neural ODEs show great promise, yet suffer the same limitations. Using ideas from differential geometry and geometric control theory, we describe how neural ODEs can be extended to smooth manifolds. We show how vector fields provide a general framework for parameterizing a flexible class of invertible mapping on these spaces and we illustrate how gradient based learning can be performed. As a result we define a general methodology for building normalizing flows on manifolds.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge