Neural Abstract Reasoner

Paper and Code

Nov 12, 2020

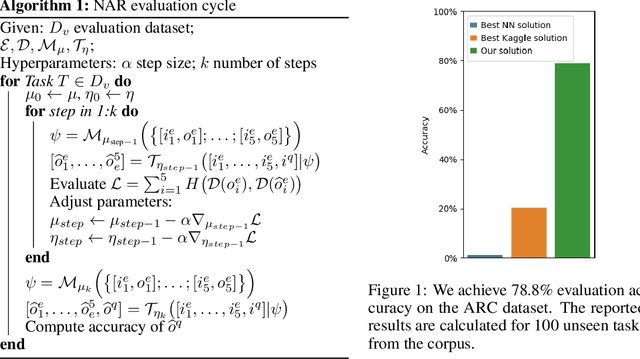

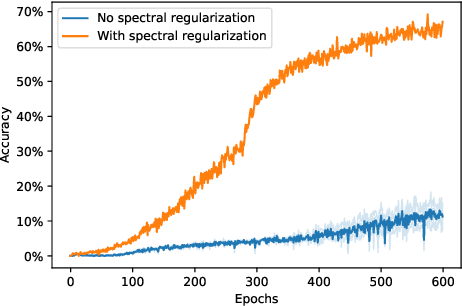

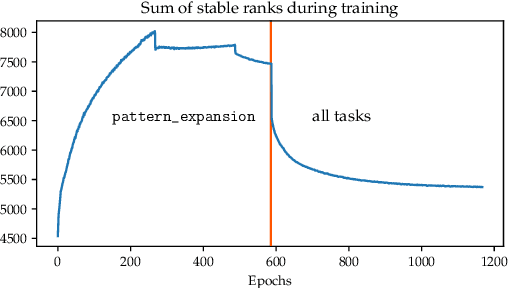

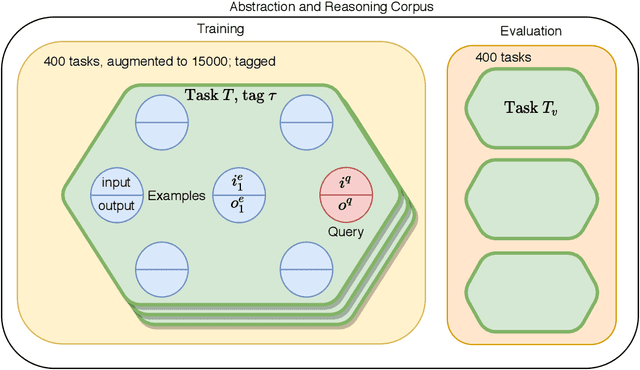

Abstract reasoning and logic inference are difficult problems for neural networks, yet essential to their applicability in highly structured domains. In this work we demonstrate that a well known technique such as spectral regularization can significantly boost the capabilities of a neural learner. We introduce the Neural Abstract Reasoner (NAR), a memory augmented architecture capable of learning and using abstract rules. We show that, when trained with spectral regularization, NAR achieves $78.8\%$ accuracy on the Abstraction and Reasoning Corpus, improving performance 4 times over the best known human hand-crafted symbolic solvers. We provide some intuition for the effects of spectral regularization in the domain of abstract reasoning based on theoretical generalization bounds and Solomonoff's theory of inductive inference.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge