Multimodal-Boost: Multimodal Medical Image Super-Resolution using Multi-Attention Network with Wavelet Transform

Paper and Code

Oct 22, 2021

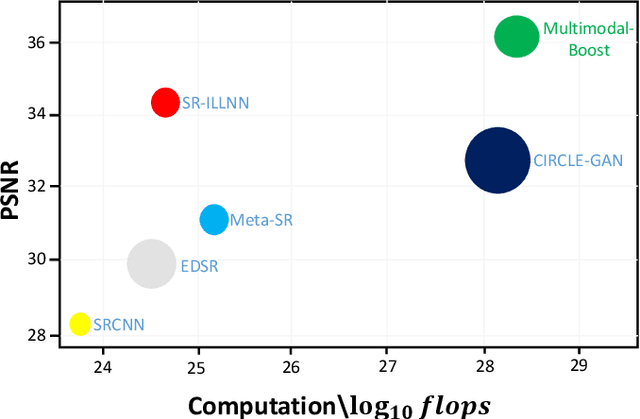

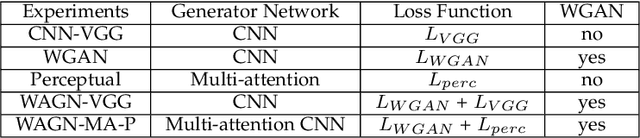

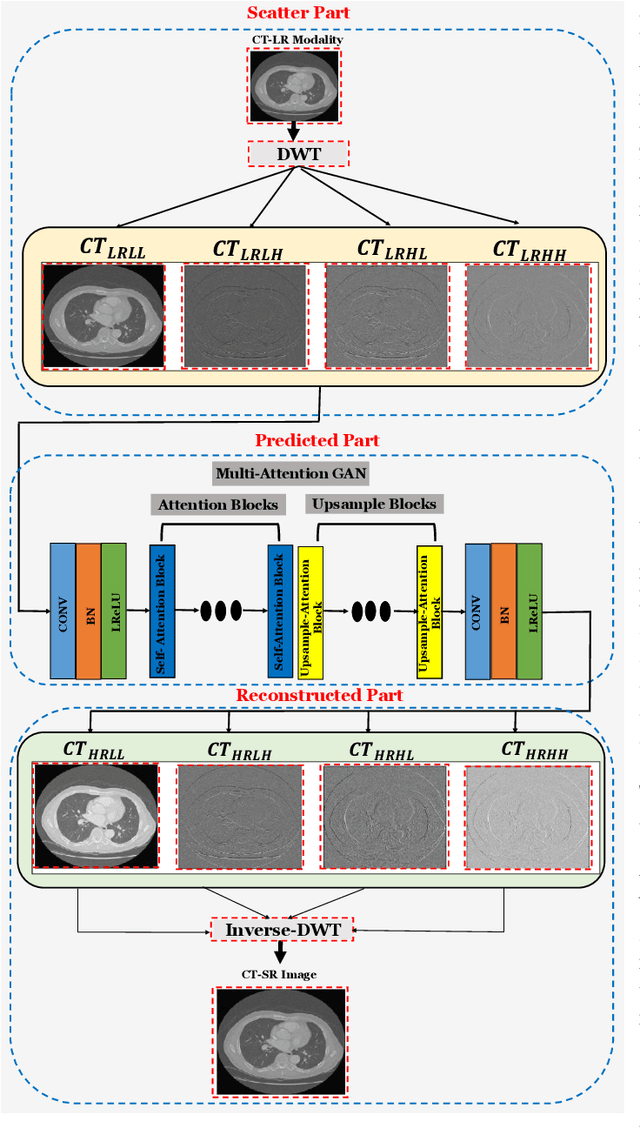

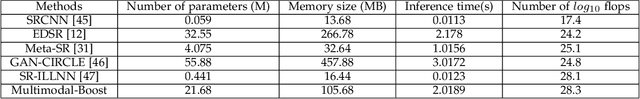

Multimodal medical images are widely used by clinicians and physicians to analyze and retrieve complementary information from high-resolution images in a non-invasive manner. The loss of corresponding image resolution degrades the overall performance of medical image diagnosis. Deep learning based single image super resolution (SISR) algorithms has revolutionized the overall diagnosis framework by continually improving the architectural components and training strategies associated with convolutional neural networks (CNN) on low-resolution images. However, existing work lacks in two ways: i) the SR output produced exhibits poor texture details, and often produce blurred edges, ii) most of the models have been developed for a single modality, hence, require modification to adapt to a new one. This work addresses (i) by proposing generative adversarial network (GAN) with deep multi-attention modules to learn high-frequency information from low-frequency data. Existing approaches based on the GAN have yielded good SR results; however, the texture details of their SR output have been experimentally confirmed to be deficient for medical images particularly. The integration of wavelet transform (WT) and GANs in our proposed SR model addresses the aforementioned limitation concerning textons. The WT divides the LR image into multiple frequency bands, while the transferred GAN utilizes multiple attention and upsample blocks to predict high-frequency components. Moreover, we present a learning technique for training a domain-specific classifier as a perceptual loss function. Combining multi-attention GAN loss with a perceptual loss function results in a reliable and efficient performance. Applying the same model for medical images from diverse modalities is challenging, our work addresses (ii) by training and performing on several modalities via transfer learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge