Multifunctionality in a Reservoir Computer

Aug 10, 2020

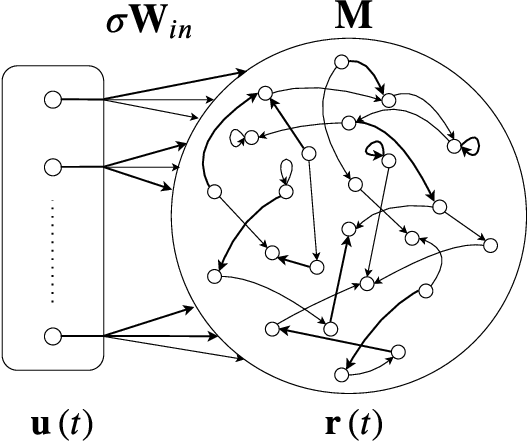

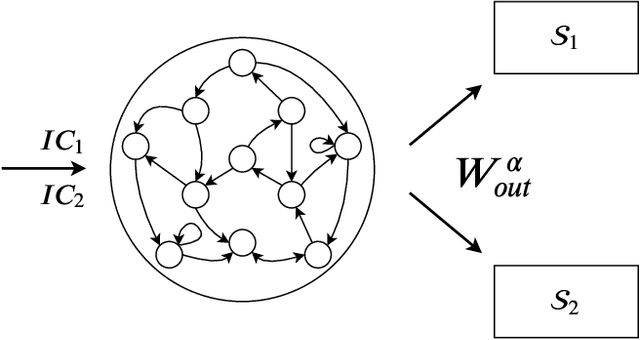

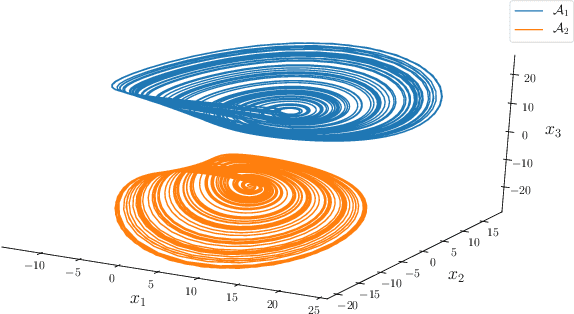

Multifunctionality is a well-observed phenomenological feature of biological neural networks and is considered to be of fundamental importance to the survival of certain species over time. These multifunctional neural networks are capable of performing more than one task without changing any network connections. In this paper we investigate how this neurological idiosyncrasy can be achieved in an artificial setting with a modern machine learning paradigm known as "reservoir computing". A training technique is designed to enable a reservoir computer to perform tasks of a multifunctional nature. We explore the critical effects that changes in certain parameters can have on the reservoir computer's ability to express multifunctionality. We also expose the existence of several "untrained attractors": attractors that dwell within the prediction state space of the reservoir computer but were not part of the training. We conduct a bifurcation analysis of these untrained attractors and discuss the implications of our results.
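The abstract does not spell out the training technique, so the following is only a minimal sketch of one way a single reservoir computer could be trained on two tasks at once, not the authors' implementation. It assumes a discrete-time echo state network with a `tanh` activation, two circular orbits traversed in opposite directions as the tasks, and a single ridge-regression readout fitted on the stacked reservoir states from both tasks. All names and parameter values (`N`, `RHO`, `SIGMA`, `BETA`, `circle`, `drive`, `closed_loop`) are hypothetical choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
N, DIM = 100, 2                     # reservoir size, input dimension (assumed)
RHO, SIGMA, BETA = 0.9, 0.5, 1e-6   # spectral radius, input scale, ridge penalty (assumed)

# Fixed random reservoir: these connections are never changed after creation.
W = rng.uniform(-1, 1, (N, N)) * (rng.random((N, N)) < 0.1)
W *= RHO / np.max(np.abs(np.linalg.eigvals(W)))
W_in = SIGMA * rng.uniform(-1, 1, (N, DIM))

def drive(u, washout=100):
    """Drive the reservoir with input sequence u; drop the first `washout` states."""
    r = np.zeros(N)
    states = np.empty((len(u), N))
    for k, x in enumerate(u):
        r = np.tanh(W @ r + W_in @ x)
        states[k] = r
    return states[washout:]

def circle(centre_x, direction, steps=500, dt=0.05):
    """Unit circle centred at (centre_x, 0); direction is +1 or -1."""
    t = dt * np.arange(steps)
    return np.column_stack([centre_x + np.cos(direction * t),
                            np.sin(direction * t)])

# Two tasks: reproduce each orbit by one-step-ahead prediction.
tasks = [circle(+2.0, +1), circle(-2.0, -1)]
washout = 100
R = np.vstack([drive(u[:-1], washout) for u in tasks])   # stacked reservoir states
Y = np.vstack([u[1 + washout:] for u in tasks])          # matching next-step targets

# One shared readout for both tasks, fitted by ridge regression.
W_out = np.linalg.solve(R.T @ R + BETA * np.eye(N), R.T @ Y).T

def closed_loop(u_init, steps=200):
    """Warm up on u_init, then feed the readout's output back in as input."""
    r = drive(u_init, washout=0)[-1]
    out = np.empty((steps, DIM))
    for k in range(steps):
        out[k] = W_out @ r
        r = np.tanh(W @ r + W_in @ out[k])
    return out
```

Calling `closed_loop(tasks[0][:150])` and `closed_loop(tasks[1][:150])` runs the same trained network from two different warm-up signals. Whether each closed-loop trajectory stays near its training orbit depends on the parameter choices above, which is the kind of sensitivity the abstract refers to.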
