Multi-modality Meets Re-learning: Mitigating Negative Transfer in Sequential Recommendation


Sep 20, 2023

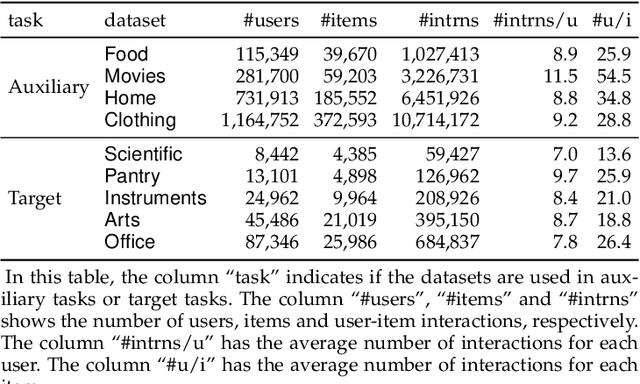

Learning effective recommendation models from sparse user interactions is a fundamental challenge in developing sequential recommendation methods. Recently, pre-training-based methods have been developed to tackle this challenge. Though these methods are promising, we show in this paper that they suffer from the notorious negative transfer issue, in which the model adapted from the pre-trained model performs worse than a model learned from scratch on the task of interest (i.e., the target task). To address this issue, we develop a method, denoted as ANT, for transferable sequential recommendation. ANT mitigates negative transfer by 1) incorporating multi-modality item information, including item texts, images and prices, to learn more transferable knowledge from related tasks (i.e., auxiliary tasks); and 2) better capturing task-specific knowledge in the target task using a re-learning-based adaptation strategy. We evaluate ANT against eight state-of-the-art baseline methods on five target tasks. Our experimental results demonstrate that ANT does not suffer from negative transfer on any of the target tasks. The results also demonstrate that ANT substantially outperforms the baseline methods on the target tasks, with an improvement of as much as 15.2%. Our analysis further shows that our re-learning-based strategy is more effective than fine-tuning on the target tasks.
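The definition of negative transfer given in the abstract can be made concrete with a small check. The sketch below is illustrative only and is not taken from the paper's code: the function names and the example scores are hypothetical, and it assumes a higher-is-better evaluation metric measured on the target task.

```python
def relative_change(adapted: float, scratch: float) -> float:
    """Percent change of the adapted model's score over the from-scratch score.

    Both scores are assumed to come from the same higher-is-better metric
    evaluated on the target task.
    """
    if scratch == 0:
        raise ValueError("from-scratch score must be non-zero")
    return 100.0 * (adapted - scratch) / scratch


def is_negative_transfer(adapted: float, scratch: float) -> bool:
    """True when the adapted (pre-trained, then adapted) model scores below
    the model learned from scratch on the target task."""
    return adapted < scratch


if __name__ == "__main__":
    # Hypothetical scores, made up for illustration; not results from the paper.
    scratch_score = 0.10
    for name, adapted_score in [("model_a", 0.08), ("model_b", 0.12)]:
        flag = is_negative_transfer(adapted_score, scratch_score)
        change = relative_change(adapted_score, scratch_score)
        print(f"{name}: change={change:+.1f}%, negative_transfer={flag}")
```

Under this reading, a method avoids negative transfer on a target task when its adapted model is at least as good as the from-scratch model on that task.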
