Multi-Frequency Information Enhanced Channel Attention Module for Speaker Representation Learning

Paper and Code

Jul 10, 2022

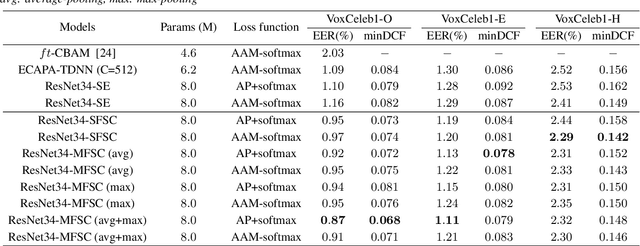

Recently, attention mechanisms have been applied successfully in neural network-based speaker verification systems. Incorporating the Squeeze-and-Excitation (SE) block into convolutional neural networks has achieved remarkable performance. However, the SE block uses global average pooling (GAP) to simply average the features along the time and frequency dimensions, which fails to preserve sufficient speaker information in the feature maps. In this study, we show mathematically that GAP is a special case of the discrete cosine transform (DCT) on the time-frequency domain, one that uses only the lowest frequency component of the frequency decomposition. To strengthen speaker information extraction, we propose to utilize multi-frequency information and design two novel attention modules: the Single-Frequency Single-Channel (SFSC) attention module and the Multi-Frequency Single-Channel (MFSC) attention module. Building on the DCT, the proposed modules capture more speaker information from multiple frequency components. We conduct comprehensive experiments on the VoxCeleb datasets and a probe evaluation on the 1st 48-UTD forensic corpus. Experimental results demonstrate that the proposed SFSC and MFSC attention modules generate more discriminative speaker representations and outperform the ResNet34-SE and ECAPA-TDNN systems, with relative EER reductions of 20.9% and 20.2%, respectively, without adding extra network parameters.
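
The claim that GAP is the lowest-frequency DCT component can be checked numerically. The following is a minimal sketch, not the authors' SFSC or MFSC implementation: it assumes an unnormalized 2D DCT-II basis, and the helper name `dct2_component` and the random feature map are hypothetical, chosen only for illustration. With frequency indices (0, 0) the cosine basis is all ones, so the coefficient is the plain sum of the map, which equals GAP up to the factor `H * W`.

```python
import numpy as np

def dct2_component(x, u, v):
    """Unnormalized 2D DCT-II coefficient at frequency indices (u, v).

    x is a single-channel (frequency x time) feature map.
    The unnormalized DCT-II convention is an assumption of this sketch.
    """
    H, W = x.shape
    i = np.arange(H)
    j = np.arange(W)
    basis_h = np.cos(np.pi * u * (i + 0.5) / H)  # 1D basis along the first axis
    basis_w = np.cos(np.pi * v * (j + 0.5) / W)  # 1D basis along the second axis
    return float(np.sum(x * np.outer(basis_h, basis_w)))

# Hypothetical single-channel feature map (sizes chosen arbitrarily).
rng = np.random.default_rng(0)
feature_map = rng.standard_normal((8, 10))

gap = float(feature_map.mean())          # global average pooling
dc = dct2_component(feature_map, 0, 0)   # lowest-frequency DCT component

# GAP equals the (0, 0) coefficient divided by the number of elements.
assert np.isclose(gap, dc / feature_map.size)
```

Calling `dct2_component` with other `(u, v)` pairs yields the higher-frequency components that GAP discards; how the paper selects and combines them in SFSC and MFSC is not specified in this abstract.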
