Multi-Agent Reinforcement Learning for Problems with Combined Individual and Team Reward

Paper and Code

Mar 24, 2020

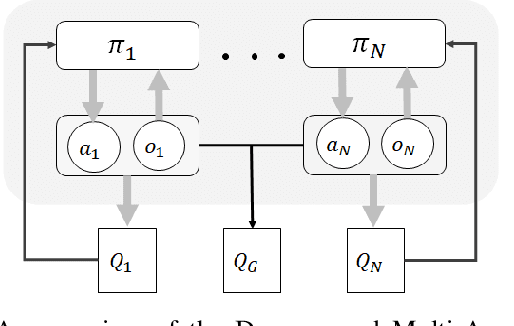

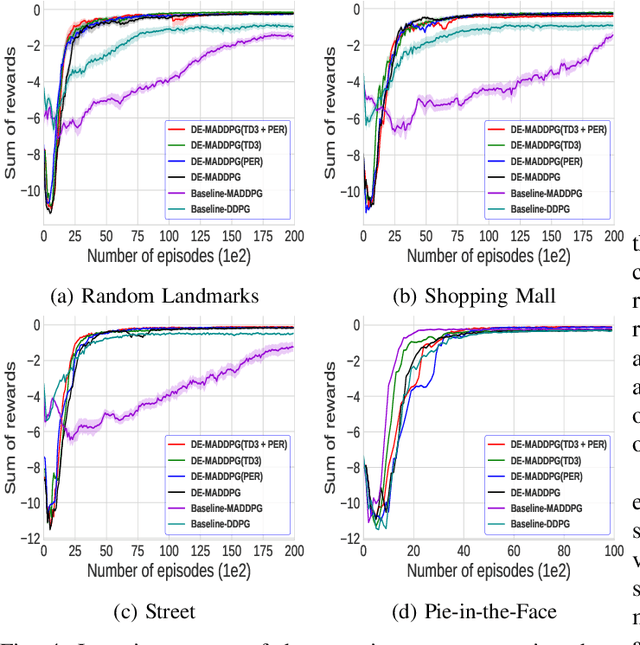

Many cooperative multi-agent problems require agents to learn individual tasks while contributing to the collective success of the group. This is a challenging task for current state-of-the-art multi-agent reinforcement algorithms that are designed to either maximize the global reward of the team or the individual local rewards. The problem is exacerbated when either of the rewards is sparse leading to unstable learning. To address this problem, we present Decomposed Multi-Agent Deep Deterministic Policy Gradient (DE-MADDPG): a novel cooperative multi-agent reinforcement learning framework that simultaneously learns to maximize the global and local rewards. We evaluate our solution on the challenging defensive escort team problem and show that our solution achieves a significantly better and more stable performance than the direct adaptation of the MADDPG algorithm.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge