Model Extraction Attacks on Graph Neural Networks: Taxonomy and Realization


Oct 24, 2020

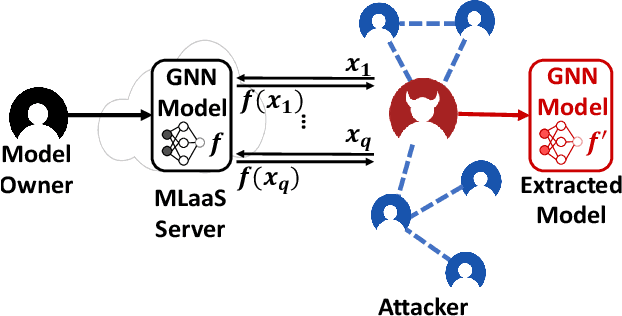

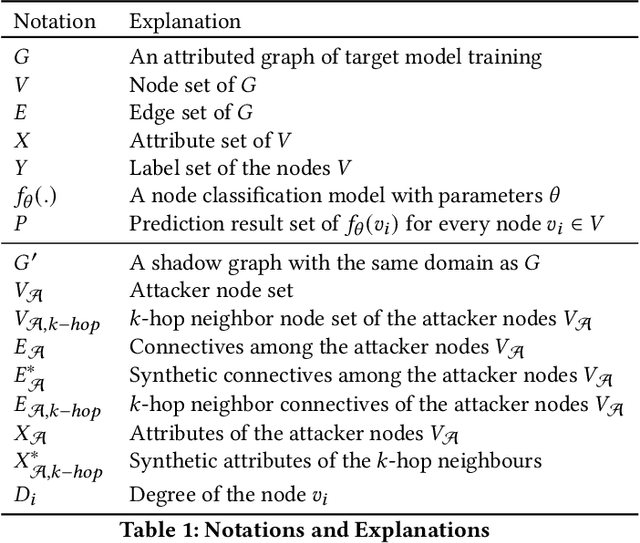

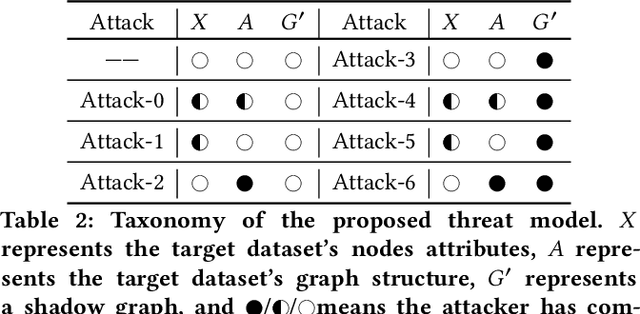

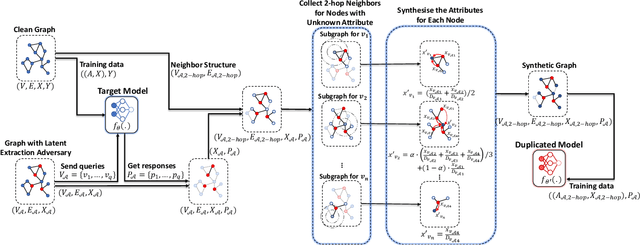

Graph neural networks (GNNs) are widely used to analyze graph-structured data in application domains such as social networks, molecular biology, and anomaly detection. Because GNN models are usually valuable intellectual property of their owners, they have also become attractive targets for attackers. Recent studies show that machine learning models face a severe threat called model extraction attacks, in which a well-trained private model owned by a service provider can be stolen by an attacker posing as a client. Unfortunately, existing work focuses on models trained on Euclidean data, e.g., images and text, while how to extract a GNN model that involves a graph structure and node features is yet to be explored. In this paper, we explore and develop model extraction attacks against GNN models. Given only black-box access to a target GNN model, the attacker aims to reconstruct a duplicate model using a few nodes that they have obtained (called attacker nodes). We first systematically formalize the threat model in the context of GNN model extraction and classify the adversarial threats into seven categories according to the attacker's background knowledge, e.g., the attributes and/or neighbor connections of the attacker nodes. We then present detailed methods that use the knowledge accessible under each threat to implement the attacks. Evaluated on three real-world datasets, our attacks are shown to extract duplicate models effectively, i.e., more than 89% of inputs in the target domain receive the same output predictions as from the victim model.
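The success criterion in the abstract (the share of inputs on which the extracted model and the victim model give the same prediction) can be illustrated with a minimal sketch. The function name `fidelity` and the toy label lists are hypothetical and chosen only for illustration; this is not the authors' evaluation code.

```python
from typing import Sequence


def fidelity(victim_labels: Sequence[int], extracted_labels: Sequence[int]) -> float:
    """Fraction of inputs on which both models predict the same label.

    Hypothetical helper: assumes both sequences hold predicted class
    labels for the same inputs, in the same order.
    """
    if len(victim_labels) != len(extracted_labels):
        raise ValueError("both models must be evaluated on the same inputs")
    if len(victim_labels) == 0:
        raise ValueError("at least one input is required")
    # Count positions where the two predictions agree.
    agreements = sum(v == e for v, e in zip(victim_labels, extracted_labels))
    return agreements / len(victim_labels)


# Toy predictions for four nodes (made-up values, not results from the paper).
victim = [0, 1, 2, 1]
extracted = [0, 1, 2, 0]
score = fidelity(victim, extracted)
```

In this toy case the two models agree on three of four nodes. Note that this measures agreement with the victim model rather than accuracy against ground-truth labels.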
