MFT: Long-Term Tracking of Every Pixel


May 22, 2023

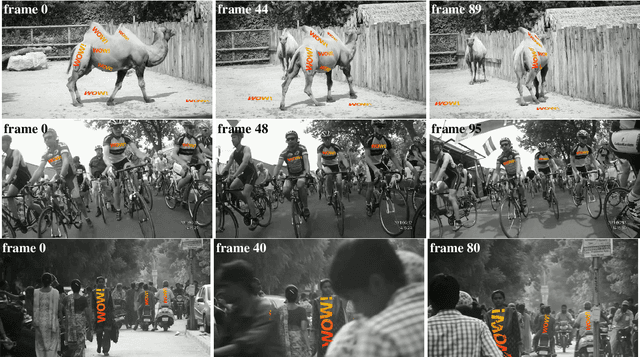

We propose MFT (Multi-Flow dense Tracker), a novel method for dense, pixel-level, long-term tracking. The approach exploits optical flows estimated not only between consecutive frames, but also for pairs of frames at logarithmically spaced intervals. It then selects the most reliable sequence of flows based on estimates of its geometric accuracy and of the occlusion probability, both provided by a pre-trained CNN. We show that MFT achieves state-of-the-art results on the TAP-Vid-DAVIS benchmark, outperforming the baselines, their combination, and published methods by a significant margin, with an average position accuracy of 70.8%, an average Jaccard of 56.1%, and an average occlusion accuracy of 86.9%. The method is insensitive to medium-length occlusions, and estimating flow with respect to the reference frame makes it more robust by reducing drift.
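The selection idea can be illustrated for a single tracked point. The sketch below is a minimal illustration under stated assumptions, not MFT's actual implementation. It assumes a hypothetical `flow_fn(src, dst, x, y)` that returns the displacement of point `(x, y)` from frame `src` to frame `dst` together with an occlusion probability and a positional variance, standing in for the pre-trained CNN. The candidate gaps (powers of two plus a direct jump back to reference frame 0) are also assumptions. So are the chaining rules (variances add, occlusion takes the maximum) and the 0.5 visibility threshold. At each frame, every candidate chain extends an earlier result by one flow, and the visible candidate with the lowest accumulated uncertainty is kept.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical flow interface (assumption): (src, dst, x, y) -> (dx, dy, occlusion_prob, variance)
FlowFn = Callable[[int, int, float, float], Tuple[float, float, float, float]]


@dataclass
class TrackState:
    x: float            # position in the current frame
    y: float
    occlusion: float    # probability that the point is occluded
    uncertainty: float  # accumulated positional variance (assumed additive along a chain)


def candidate_deltas(t: int, max_power: int = 5) -> List[int]:
    """Logarithmically spaced frame gaps 1, 2, 4, ... plus the gap back to reference frame 0."""
    deltas = {2 ** k for k in range(max_power + 1) if 2 ** k <= t}
    deltas.add(t)  # direct flow from the reference frame limits drift
    return sorted(deltas)


def track_point(x0: float, y0: float, num_frames: int, flow_fn: FlowFn,
                occlusion_threshold: float = 0.5) -> List[TrackState]:
    """Track one point from frame 0, choosing the most reliable flow chain per frame."""
    states: Dict[int, TrackState] = {0: TrackState(x0, y0, 0.0, 0.0)}
    for t in range(1, num_frames):
        candidates = []
        for d in candidate_deltas(t):
            prev = states[t - d]
            # Extend the result stored for frame t - d by one flow t - d -> t.
            dx, dy, occ, var = flow_fn(t - d, t, prev.x, prev.y)
            candidates.append(TrackState(
                x=prev.x + dx,
                y=prev.y + dy,
                occlusion=max(prev.occlusion, occ),  # chain is occluded if any link is
                uncertainty=prev.uncertainty + var,
            ))
        # Prefer visible candidates; fall back to all candidates if every chain is occluded.
        visible = [c for c in candidates if c.occlusion < occlusion_threshold]
        pool = visible if visible else candidates
        states[t] = min(pool, key=lambda c: c.uncertainty)
    return [states[t] for t in range(num_frames)]
```

In a toy run where the direct flow from frame 0 is flagged as occluded for frames 3 and 4, the tracker falls back to chains through intermediate frames. It then returns to the direct, lowest-variance flow once that flow is visible again. This mirrors, in simplified form, how multiple flow spans let a tracker bridge medium-length occlusions.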
