Meta-simulation for the Automated Design of Synthetic Overhead Imagery

Sep 19, 2022

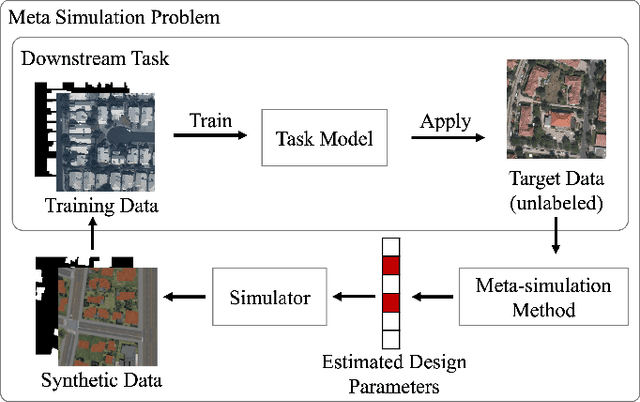

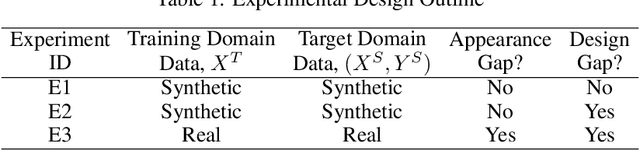

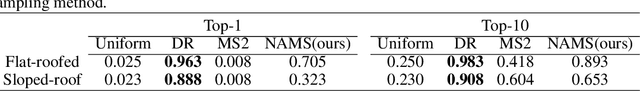

The use of synthetic (or simulated) data for training machine learning models has grown rapidly in recent years. Synthetic data can often be generated much faster and more cheaply than its real-world counterpart. One challenge of using synthetic imagery, however, is scene design: e.g., the choice of content, its features, and its spatial arrangement. To be effective, this design must be not only realistic but also appropriate for the target domain, which (by assumption) is unlabeled. In this work, we propose an approach to automatically choose the design of synthetic imagery based upon unlabeled real-world imagery. Our approach, termed Neural-Adjoint Meta-Simulation (NAMS), builds upon recent seminal meta-simulation approaches. In contrast to the current state-of-the-art methods, our approach can be pre-trained once offline, and then provides fast design inference for new target imagery. Using both synthetic and real-world problems, we show that NAMS infers synthetic designs that match both the in-domain and out-of-domain target imagery, and that training segmentation models with NAMS-designed imagery yields superior results compared to naïve randomized designs and state-of-the-art meta-simulation methods.
