Memristor Hardware-Friendly Reinforcement Learning

Paper and Code

Jan 20, 2020

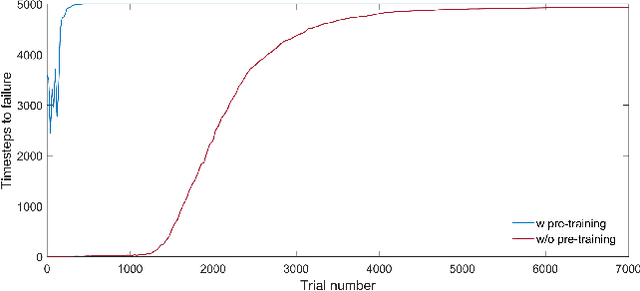

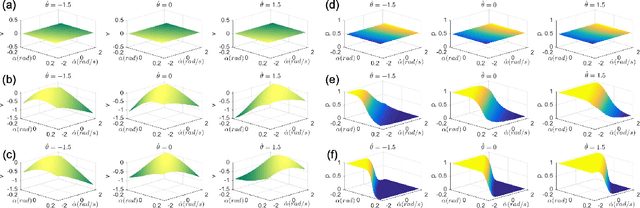

Recently, significant progress has been made in solving sophisticated problems across various domains using reinforcement learning (RL), which allows machines or agents to learn from interactions with environments rather than from explicit supervision. As the end of Moore's law appears imminent, emerging technologies that enable high-performance neuromorphic hardware systems are attracting increasing attention. In particular, neuromorphic architectures that use memristors, programmable and nonvolatile two-terminal devices, as synaptic weights in hardware neural networks are candidates of choice for realizing such highly energy-efficient and complex nervous systems. However, one of the challenges for memristive hardware with integrated learning capabilities is the prohibitively large number of write cycles that might be required during the learning process, and this problem is exacerbated further in RL settings. In this work, we propose a memristive neuromorphic hardware implementation of the actor-critic algorithm in RL. By introducing a two-fold training procedure (i.e., ex-situ pre-training and in-situ re-training) and several training techniques, the number of weight updates can be significantly reduced, making the approach suitable for efficient in-situ learning implementations. As a case study, we consider the task of balancing an inverted pendulum, a classical problem in both RL and control theory. We believe that this study shows the promise of using memristor-based hardware neural networks for handling complex tasks through in-situ reinforcement learning.
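To make the write-cycle concern concrete, the following is a minimal software sketch of a one-step actor-critic update in which weight changes below a threshold are skipped, so that fewer weights would need to be rewritten. It is an illustration under stated assumptions, not the procedure used in the paper: the linear critic, the softmax actor, the function name `actor_critic_step`, and the `threshold` parameter are all hypothetical choices made here, and the abstract does not specify which training techniques the authors use.

```python
import numpy as np

def actor_critic_step(w_critic, w_actor, s, a, r, s_next, done,
                      gamma=0.99, lr_critic=0.1, lr_actor=0.01,
                      threshold=0.0):
    """One-step actor-critic update (illustrative, hypothetical names).

    w_critic: (n_features,) weights of a linear state-value estimate.
    w_actor:  (n_actions, n_features) weights of a softmax policy.
    threshold: updates with magnitude <= threshold are skipped; this is
               an assumed stand-in for a write-reduction technique.
    Returns the new weights, the TD error, and the number of weight
    entries that were actually changed ("writes").
    """
    # Critic: temporal-difference error of the value estimate.
    v = w_critic @ s
    v_next = 0.0 if done else w_critic @ s_next
    td_error = r + gamma * v_next - v

    # Proposed critic update (gradient of v with respect to w is s).
    d_critic = lr_critic * td_error * s

    # Actor: softmax policy over actions and its log-probability gradient.
    logits = w_actor @ s
    probs = np.exp(logits - logits.max())
    probs /= probs.sum()
    grad_log = -np.outer(probs, s)
    grad_log[a] += s
    d_actor = lr_actor * td_error * grad_log

    # Apply only updates larger than the threshold and count them.
    mask_c = np.abs(d_critic) > threshold
    mask_a = np.abs(d_actor) > threshold
    w_critic = w_critic + d_critic * mask_c
    w_actor = w_actor + d_actor * mask_a
    writes = int(mask_c.sum() + mask_a.sum())
    return w_critic, w_actor, td_error, writes
```

Calling the function on the same transition with a larger `threshold` returns a smaller `writes` count, which is the quantity a memristive implementation would want to keep low. How a skipped update affects learning quality depends on the task and is not addressed by this sketch.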
