Memorizing All for Implicit Discourse Relation Recognition

Aug 29, 2019

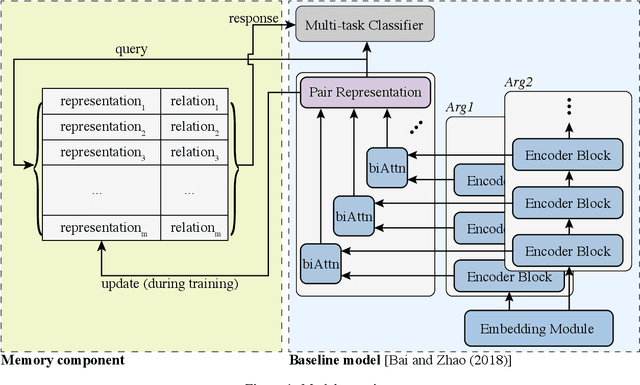

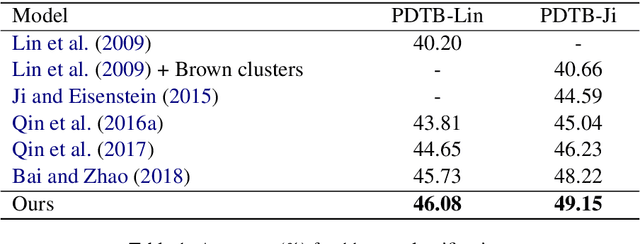

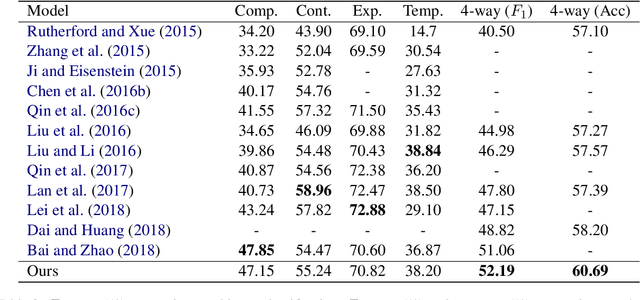

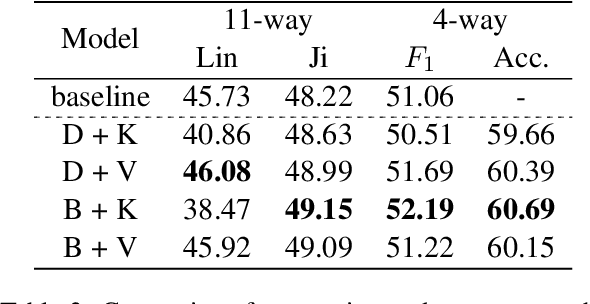

Implicit discourse relation recognition is a challenging task because no explicit connective is available to serve as an informative cue. Predicting a relation requires a deep understanding of the semantics of the sentence pair. Because an implicit discourse relation recognizer must carefully handle the semantic similarity of the given sentence pairs while also coping with severe data sparsity, it should benefit from mastering the entire training data. In this paper, we therefore propose a novel memory mechanism that addresses these challenges and further improves performance. The memory mechanism memorizes information by pairing the representations and discourse relations of all training instances, which directly addresses the data-hungry nature of current implicit discourse relation recognizers. Our experiments show that our full model, which memorizes the entire training set, achieves a new state of the art against strong baselines and, for the first time, exceeds the milestone of 60% accuracy in the 4-way task.
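The idea of pairing each training instance's representation with its discourse relation can be illustrated with a small key-value memory. The following is only a minimal sketch under stated assumptions, not the model described in the paper: the class name `RelationMemory`, the dot-product scoring, the softmax read, and the toy two-dimensional vectors are all hypothetical choices made for illustration, and the four relation names assume the usual top-level senses of a 4-way setup.

```python
import math

# Assumed label set for a 4-way task (illustrative, not taken from the abstract).
RELATIONS = ["Comparison", "Contingency", "Expansion", "Temporal"]


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class RelationMemory:
    """Hypothetical key-value memory: keys are instance representations,
    values are the discourse relation ids of those instances."""

    def __init__(self, num_relations):
        self.num_relations = num_relations
        self.keys = []    # one representation vector per training instance
        self.values = []  # the relation id paired with each representation

    def write(self, representation, relation_id):
        """Memorize one training instance as a (representation, relation) pair."""
        self.keys.append(list(representation))
        self.values.append(relation_id)

    def read(self, query):
        """Attend over all memorized instances and return a distribution
        over relations, weighted by dot-product similarity to the query."""
        if not self.keys:
            raise ValueError("memory is empty")
        scores = [sum(q * k for q, k in zip(query, key)) for key in self.keys]
        weights = softmax(scores)
        distribution = [0.0] * self.num_relations
        for weight, relation_id in zip(weights, self.values):
            distribution[relation_id] += weight
        return distribution


# Toy usage: memorize two instances, then query with a vector close to the first.
memory = RelationMemory(num_relations=len(RELATIONS))
memory.write([1.0, 0.0], RELATIONS.index("Comparison"))
memory.write([0.0, 1.0], RELATIONS.index("Expansion"))
dist = memory.read([2.0, 0.0])
predicted = RELATIONS[dist.index(max(dist))]
```

In this sketch the query is most similar to the first memorized instance, so most of the attention mass falls on that instance's relation. In a real system the representations would come from a trained sentence-pair encoder, and the memory read would typically be combined with the encoder's own prediction rather than used alone.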
