Measuring the Quality of Explanations: The System Causability Scale (SCS). Comparing Human and Machine Explanations

Paper and Code

Dec 19, 2019

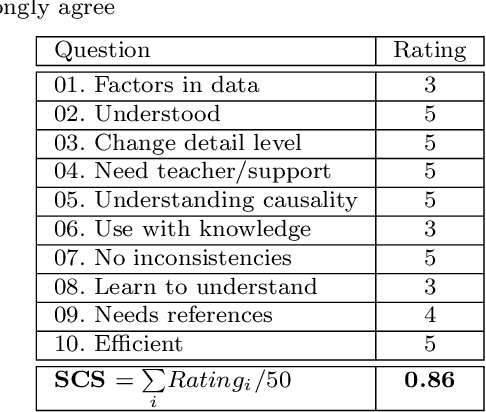

Recent success in Artificial Intelligence (AI) and Machine Learning (ML) allow problem solving automatically without any human intervention. Autonomous approaches can be very convenient. However, in certain domains, e.g., in the medical domain, it is necessary to enable a domain expert to understand, why an algorithm came up with a certain result. Consequently, the field of Explainable AI (xAI) rapidly gained interest worldwide in various domains, particularly in medicine. Explainable AI studies transparency and traceability of opaque AI/ML and there are already a huge variety of methods. For example with layer-wise relevance propagation relevant parts of inputs to, and representations in, a neural network which caused a result, can be highlighted. This is a first important step to ensure that end users, e.g., medical professionals, assume responsibility for decision making with AI/ML and of interest to professionals and regulators. Interactive ML adds the component of human expertise to AI/ML processes by enabling them to re-enact and retrace AI/ML results, e.g. let them check it for plausibility. This requires new human-AI interfaces for explainable AI. In order to build effective and efficient interactive human-AI interfaces we have to deal with the question of how to evaluate the quality of explanations given by an explainable AI system. In this paper we introduce our System Causability Scale (SCS) to measure the quality of explanations. It is based on our notion of Causability (Holzinger et al., 2019) combined with concepts adapted from a widely accepted usability scale.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge