Matrix Factorization Equals Efficient Co-occurrence Representation

Paper and Code

Aug 28, 2018

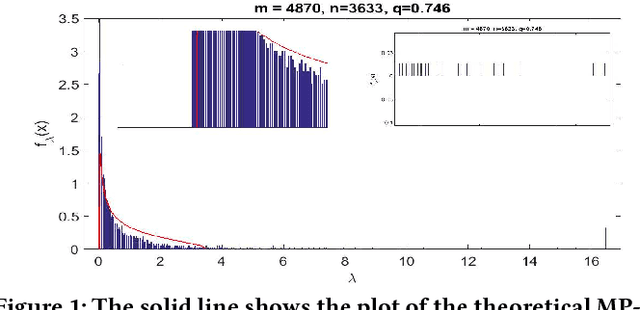

Matrix factorization is a simple and effective solution to the recommendation problem. It has been extensively employed in the industry and has attracted much attention from the academia. However, it is unclear what the low-dimensional matrices represent. We show that matrix factorization can actually be seen as simultaneously calculating the eigenvectors of the user-user and item-item sample co-occurrence matrices. We then use insights from random matrix theory (RMT) to show that picking the top eigenvectors corresponds to removing sampling noise from user/item co-occurrence matrices. Therefore, the low-dimension matrices represent a reduced noise user and item co-occurrence space. We also analyze the structure of the top eigenvector and show that it corresponds to global effects and removing it results in less popular items being recommended. This increases the diversity of the items recommended without affecting the accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge