Massive Autonomous UAV Path Planning: A Neural Network Based Mean-Field Game Theoretic Approach


May 10, 2019

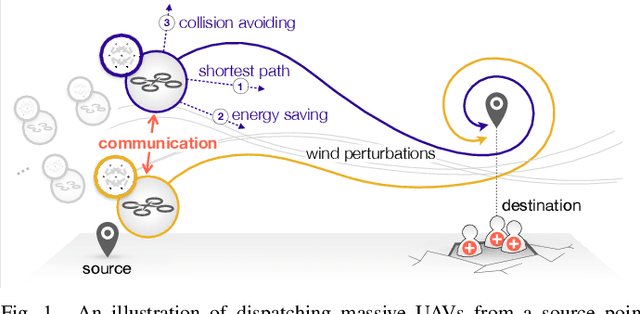

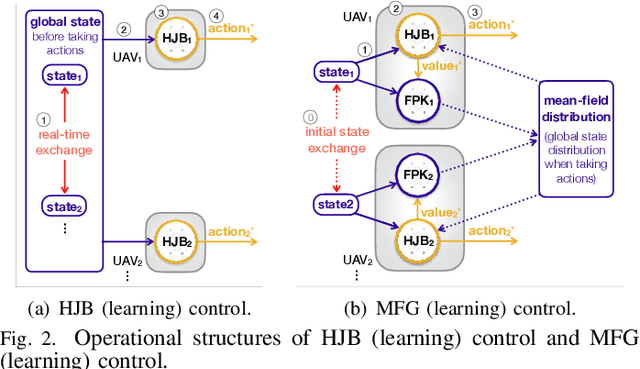

This paper investigates the autonomous control of a massive number of unmanned aerial vehicles (UAVs) for mission-critical applications (e.g., dispatching many UAVs from a source to a destination for firefighting). Achieving fast travel and low motion energy without inter-UAV collisions under wind perturbations is a daunting control task, and it incurs high communication energy because UAV states must be exchanged in real time. We tackle this problem by exploiting a mean-field game (MFG) theoretic control method that requires UAV states to be exchanged only once, at the initial source. Afterwards, each UAV can control its acceleration by locally solving two partial differential equations (PDEs), known as the Hamilton-Jacobi-Bellman (HJB) and Fokker-Planck-Kolmogorov (FPK) equations. This approach, however, incurs high computation energy for solving the PDEs, particularly when the UAV states are multi-dimensional. We address this issue by utilizing a machine learning (ML) method in which two separate ML models approximate the solutions of the HJB and FPK equations. These ML models are trained and exploited using an online gradient descent method with low computational complexity. Numerical evaluations validate that the proposed ML-aided MFG theoretic algorithm, referred to as MFG learning control, is effective in collision avoidance with low communication energy and acceptable computation energy.
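
For readers unfamiliar with the coupled HJB and FPK equations mentioned above, the following is a generic textbook form of a mean-field game system, given only as an illustration. It is not the paper's formulation: it assumes a simplified state $x$ driven directly by a control $a$ with noise level $\sigma$, a quadratic control cost, a hypothetical interaction cost $g$ (e.g., a collision penalty depending on the population density $m$), and a hypothetical terminal cost $\psi$. In the paper the control is the acceleration and the state is multi-dimensional, so the actual equations differ.

$$
\begin{aligned}
&\text{Dynamics:} && dX_t = a_t\,dt + \sigma\,dW_t, \\
&\text{Cost:} && J(a) = \mathbb{E}\left[\int_0^T \Big(\tfrac{1}{2}\lvert a_t\rvert^2 + g\big(X_t, m(t,\cdot)\big)\Big)\,dt + \psi(X_T)\right], \\
&\text{HJB (backward in time):} && -\partial_t u - \tfrac{\sigma^2}{2}\,\Delta u + \tfrac{1}{2}\lvert\nabla u\rvert^2 = g(x, m), \qquad u(T,x) = \psi(x), \\
&\text{Optimal control:} && a^*(t,x) = -\nabla u(t,x), \\
&\text{FPK (forward in time):} && \partial_t m - \tfrac{\sigma^2}{2}\,\Delta m - \nabla\cdot\big(m\,\nabla u\big) = 0, \qquad m(0,x) = m_0(x).
\end{aligned}
$$

In this sketch, $u$ is the value function of a single agent and $m$ is the density of the whole population. The HJB equation yields the control given $m$, and the FPK equation propagates $m$ under that control, which is why only the initial distribution $m_0$ needs to be shared. Under these assumptions, the two ML models described in the abstract would play the roles of approximators for $u$ and $m$.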
