Map Feature Perception Metric for Map Generation Quality Assessment and Loss Optimization

Mar 30, 2025

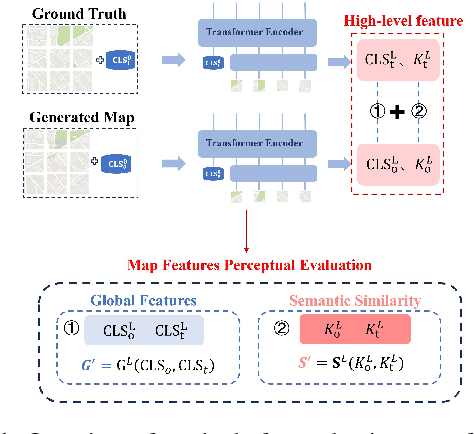

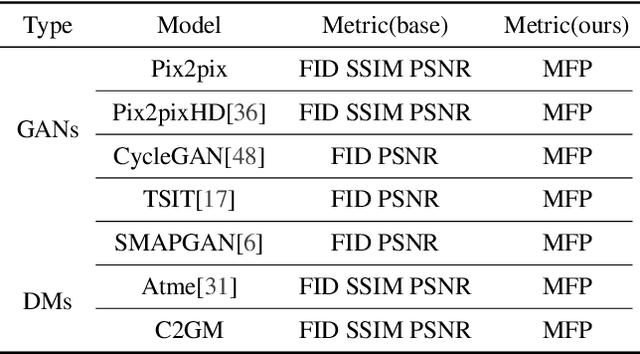

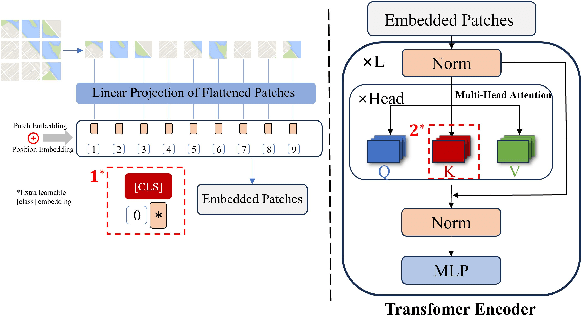

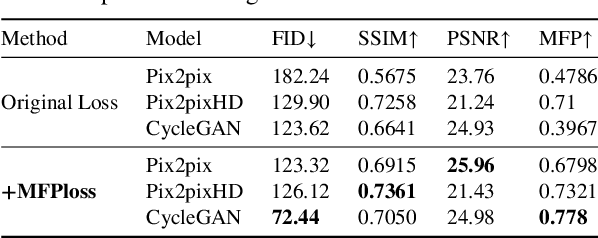

In map generation tasks driven by generative models, the authenticity of the synthesized maps is a critical concern, and choosing evaluation metrics that quantify this authenticity remains a key research challenge. Current methods mostly borrow image assessment metrics from computer vision to measure the discrepancy between generated and reference maps. However, conventional visual similarity metrics (including L1, L2, SSIM, and FID) operate primarily on pixel-level comparisons and therefore capture cartographic global features and spatial correlations poorly, which leads to semantic and structural artifacts in the generated outputs. This study introduces a novel Map Feature Perception (MFP) metric that evaluates global characteristics and spatial congruence between synthesized and target maps. Unlike pixel-wise metrics, the approach extracts element-level deep features that encode the structural integrity and topological relationships of a map. Experimental validation demonstrates MFP's superior capability in evaluating cartographic semantic features, with its classification-enhanced implementations outperforming conventional loss functions across diverse generative frameworks. When used as an optimization objective, the metric achieves performance gains of 2% to 50% across multiple benchmarks compared with traditional L1, L2, and SSIM baselines. The study concludes that explicitly accounting for cartographic global attributes and spatial coherence substantially improves generative model optimization and, in turn, the geographical plausibility of synthesized maps.
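The contrast between pixel-wise and feature-space comparison can be illustrated with a toy sketch. This is not the paper's implementation: the abstract does not specify the feature network, so `block_mean_features` below is a hypothetical stand-in (simple block averaging) for a learned deep feature extractor, and the function names are assumptions made for illustration only.

```python
import numpy as np


def pixel_l1(a, b):
    """Mean absolute per-pixel difference (the conventional L1 metric)."""
    return float(np.mean(np.abs(a - b)))


def block_mean_features(img, block=2):
    """Hypothetical stand-in for a learned feature extractor.

    Averages the image over non-overlapping block x block windows.
    A real feature-perception metric would use deep network activations.
    """
    h, w = img.shape
    h2, w2 = h - h % block, w - w % block  # crop to a multiple of block
    cropped = img[:h2, :w2]
    return cropped.reshape(h2 // block, block, w2 // block, block).mean(axis=(1, 3))


def feature_distance(a, b, extractor=block_mean_features):
    """Mean squared distance between the extracted feature maps."""
    fa, fb = extractor(a), extractor(b)
    return float(np.mean((fa - fb) ** 2))


# A checkerboard and the same pattern shifted by one pixel.
yy, xx = np.indices((8, 8))
board = ((yy + xx) % 2).astype(float)
shifted = np.roll(board, 1, axis=1)

print("pixel L1:        ", pixel_l1(board, shifted))
print("feature distance:", feature_distance(board, shifted))
```

In this toy case the two images differ at every pixel, so the pixel-wise score is at its maximum, while the pooled features are identical. With a learned extractor in place of `block_mean_features`, the same `feature_distance` structure could serve as a loss term alongside or instead of L1, which is the role the abstract describes for the metric.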
