MAMRL: Exploiting Multi-agent Meta Reinforcement Learning in WAN Traffic Engineering

Paper and Code

Nov 30, 2021

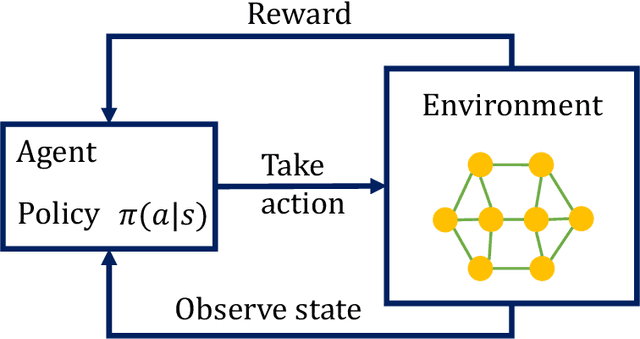

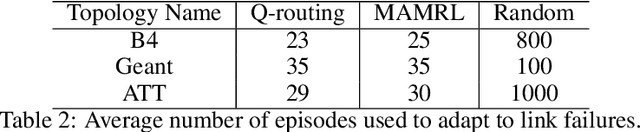

Traffic optimization challenges, such as load balancing, flow scheduling, and improving packet delivery time, are difficult online decision-making problems in wide area networks (WAN). Complex heuristics are needed for instance to find optimal paths that improve packet delivery time and minimize interruptions which may be caused by link failures or congestion. The recent success of reinforcement learning (RL) algorithms can provide useful solutions to build better robust systems that learn from experience in model-free settings. In this work, we consider a path optimization problem, specifically for packet routing, in large complex networks. We develop and evaluate a model-free approach, applying multi-agent meta reinforcement learning (MAMRL) that can determine the next-hop of each packet to get it delivered to its destination with minimum time overall. Specifically, we propose to leverage and compare deep policy optimization RL algorithms for enabling distributed model-free control in communication networks and present a novel meta-learning-based framework, MAMRL, for enabling quick adaptation to topology changes. To evaluate the proposed framework, we simulate with various WAN topologies. Our extensive packet-level simulation results show that compared to classical shortest path and traditional reinforcement learning approaches, MAMRL significantly reduces the average packet delivery time even when network demand increases; and compared to a non-meta deep policy optimization algorithm, our results show the reduction of packet loss in much fewer episodes when link failures occur while offering comparable average packet delivery time.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge