Maintaining a Reliable World Model using Action-aware Perceptual Anchoring

Paper and Code

Jul 07, 2021

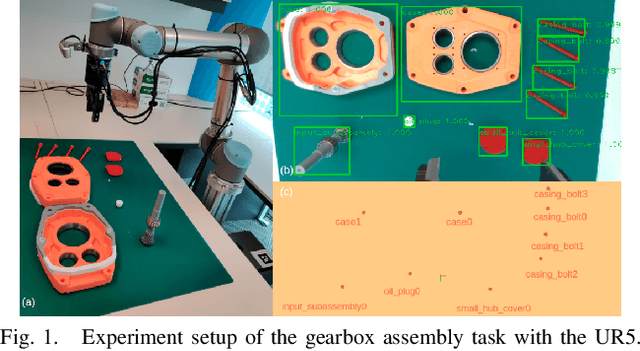

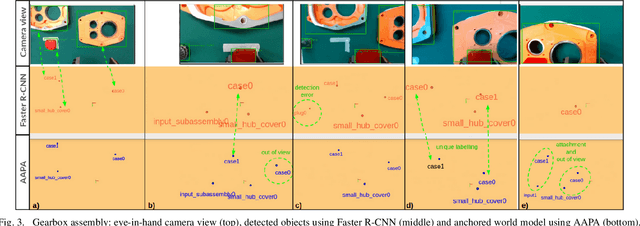

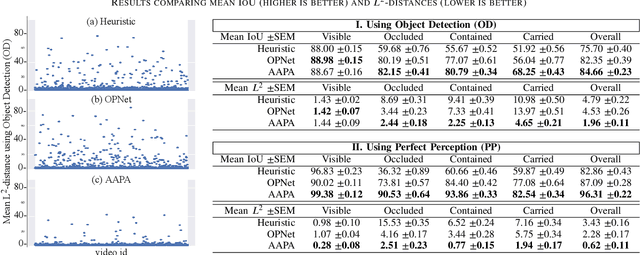

Reliable perception is essential for robots that interact with the world. But sensors alone are often insufficient to provide this capability, and they are prone to errors due to various conditions in the environment. Furthermore, there is a need for robots to maintain a model of its surroundings even when objects go out of view and are no longer visible. This requires anchoring perceptual information onto symbols that represent the objects in the environment. In this paper, we present a model for action-aware perceptual anchoring that enables robots to track objects in a persistent manner. Our rule-based approach considers inductive biases to perform high-level reasoning over the results from low-level object detection, and it improves the robot's perceptual capability for complex tasks. We evaluate our model against existing baseline models for object permanence and show that it outperforms these on a snitch localisation task using a dataset of 1,371 videos. We also integrate our action-aware perceptual anchoring in the context of a cognitive architecture and demonstrate its benefits in a realistic gearbox assembly task on a Universal Robot.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge