Magnitude-Phase Dual-Path Speech Enhancement Network based on Self-Supervised Embedding and Perceptual Contrast Stretch Boosting

Paper and Code

Mar 27, 2025

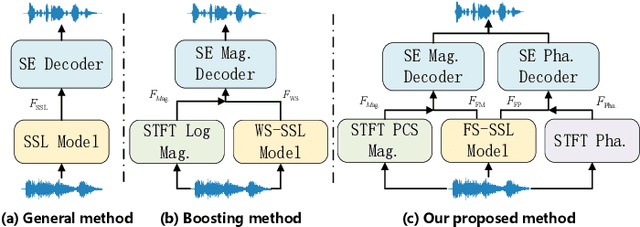

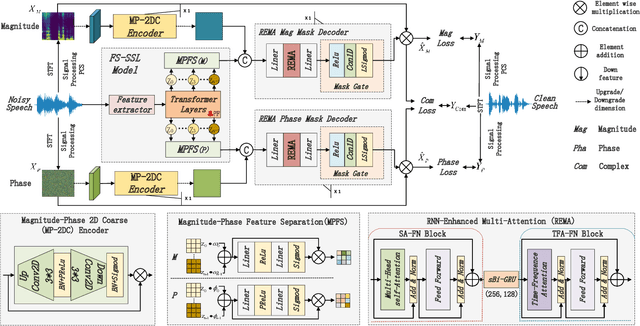

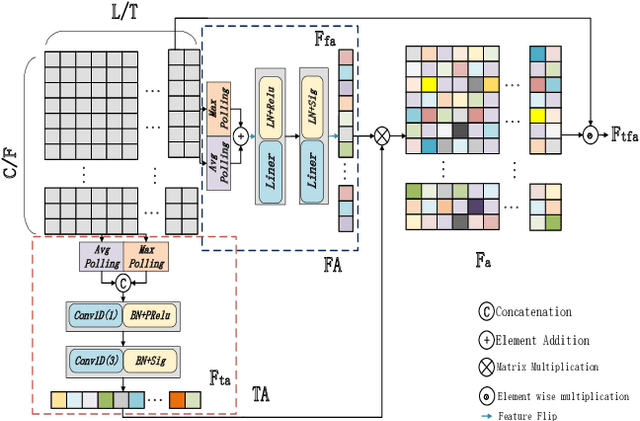

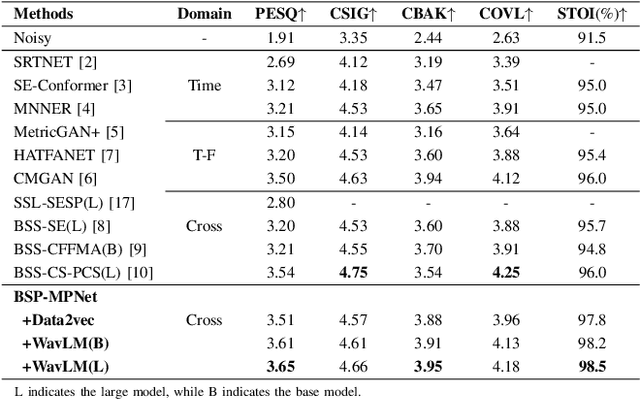

Speech self-supervised learning (SSL) has made great progress in various speech processing tasks, but there is still room for improvement in speech enhancement (SE). This paper presents BSP-MPNet, a dual-path framework that combines self-supervised features with magnitude-phase information for SE. The approach starts by applying the perceptual contrast stretching (PCS) algorithm to enhance the magnitude-phase spectrum. A magnitude-phase 2D coarse (MP-2DC) encoder then extracts coarse features from the enhanced spectrum. Next, a feature-separating self-supervised learning (FS-SSL) model generates self-supervised embeddings for the magnitude and phase components separately. These embeddings are fused to create cross-domain feature representations. Finally, two parallel RNN-enhanced multi-attention (REMA) mask decoders refine the features, apply them to the mask, and reconstruct the speech signal. We evaluate BSP-MPNet on the VoiceBank+DEMAND and WHAMR! datasets. Experimental results show that BSP-MPNet outperforms existing methods under various noise conditions, providing new directions for self-supervised speech enhancement research. The implementation of the BSP-MPNet code is available online\footnote[2]{https://github.com/AlimMat/BSP-MPNet. \label{s1}}

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge