LW-FedSSL: Resource-efficient Layer-wise Federated Self-supervised Learning


Jan 22, 2024

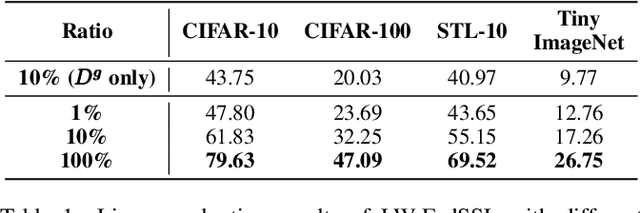

Many recent studies integrate federated learning (FL) with self-supervised learning (SSL) to take advantage of raw training data distributed across edge devices. However, edge devices often struggle with high computation and communication costs imposed by SSL and FL algorithms. To tackle this hindrance, we propose LW-FedSSL, a layer-wise federated self-supervised learning approach that allows edge devices to incrementally train one layer of the model at a time. LW-FedSSL comprises server-side calibration and representation alignment mechanisms to maintain comparable performance with end-to-end FedSSL while significantly lowering clients' resource requirements. The server-side calibration mechanism takes advantage of the resource-rich server in an FL environment to assist in global model training. Meanwhile, the representation alignment mechanism encourages closeness between representations of FL local models and those of the global model. Our experiments show that LW-FedSSL has a $3.3 \times$ lower memory requirement and a $3.2 \times$ cheaper communication cost than its end-to-end counterpart. We also explore a progressive training strategy called Prog-FedSSL that outperforms end-to-end training with a similar memory requirement and a $1.8 \times$ cheaper communication cost.
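To make the difference between the three training schedules concrete, the following is a minimal sketch, not the authors' implementation. It assumes that layer-wise training has a client train and upload only the layer of the current stage, that progressive training covers all layers up to the current stage, and that end-to-end training covers every layer at every stage. It further assumes equally sized layers and the same number of rounds per stage. The function names `active_layers` and `relative_upload_cost` are hypothetical. The toy ratios it produces are not expected to match the figures reported above, since the sketch ignores server-side calibration, representation alignment, and non-layer components of the model.

```python
from typing import List


def active_layers(stage: int, num_layers: int, strategy: str) -> List[int]:
    """Return 0-based indices of the layers a client trains (and uploads) at `stage`.

    Assumed schedules (illustrative only):
      - "layer_wise":  only the layer matching the current stage
      - "progressive": every layer up to and including the current stage
      - "end_to_end":  all layers, regardless of stage
    """
    if not 0 <= stage < num_layers:
        raise ValueError("stage must satisfy 0 <= stage < num_layers")
    if strategy == "layer_wise":
        return [stage]
    if strategy == "progressive":
        return list(range(stage + 1))
    if strategy == "end_to_end":
        return list(range(num_layers))
    raise ValueError(f"unknown strategy: {strategy!r}")


def relative_upload_cost(num_layers: int, strategy: str) -> float:
    """Upload cost relative to end-to-end training.

    Assumes equally sized layers and one stage per layer, with the same
    number of communication rounds in each stage.
    """
    uploaded = sum(
        len(active_layers(stage, num_layers, strategy))
        for stage in range(num_layers)
    )
    baseline = num_layers * num_layers  # end-to-end: all layers at every stage
    return uploaded / baseline


if __name__ == "__main__":
    for name in ("layer_wise", "progressive", "end_to_end"):
        print(name, relative_upload_cost(4, name))
```

Under these simplifying assumptions, the layer-wise schedule uploads a fraction 1/L of the end-to-end volume for an L-layer model, while the progressive schedule uploads (L + 1) / (2L).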
