Locate, Segment and Match: A Pipeline for Object Matching and Registration


May 01, 2018

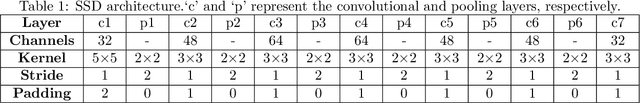

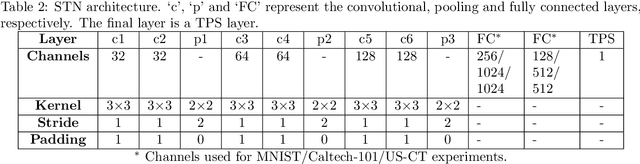

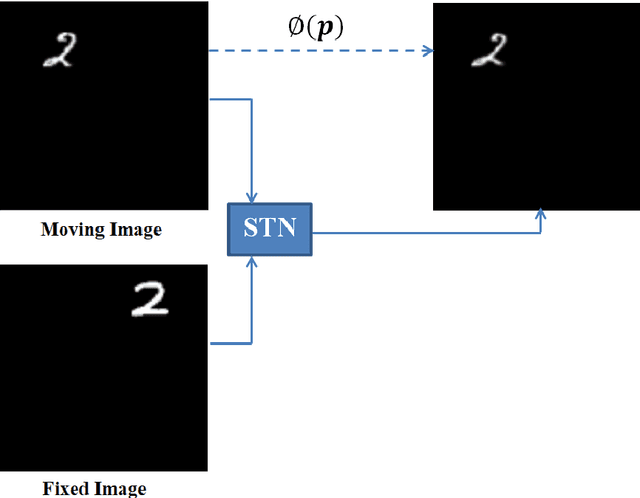

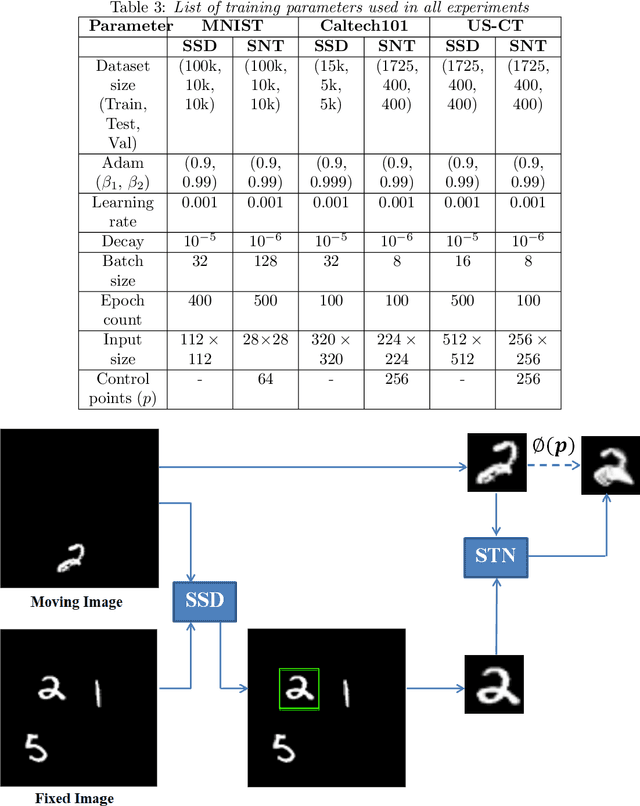

Image registration requires processing multiple images simultaneously to match the keypoints or landmarks of the objects they contain. These images often come from different modalities, for example CT and ultrasound (US), which makes it challenging to establish one-to-one correspondence. In this work, a novel pipeline of convolutional neural networks is developed to perform the registration. The overall objective is divided into three parts: localization of the object of interest, segmentation, and a matching transformation. Most existing approaches skip the localization step and are prone to fail in general scenarios. We overcome this challenge through detection, which also establishes an initial correspondence between the images. A modified version of the Single Shot MultiBox Detector (SSD) is used for this purpose. The detected region is cropped and then segmented to generate a mask of the desired object. A spatial transformer network employing thin plate spline deformation uses the mask to perform the registration. Initial experiments on the MNIST and Caltech-101 datasets show that the proposed model is able to accurately match the segmented images. The proposed framework is extended to the registration of CT and US images; it is free from data-specific assumptions and generalizes better than existing rule-based/classical approaches.
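The thin plate spline deformation mentioned in the abstract can be illustrated with a minimal sketch. This is not the authors' implementation: it only shows the classical TPS mapping fitted to a given set of control points, whereas in the paper a spatial transformer network is responsible for producing the deformation. The function names (`tps_kernel`, `solve`, `fit_tps`, `apply_tps`) and the example control points are hypothetical and chosen for illustration only.

```python
import math

def tps_kernel(r2):
    """TPS radial basis U(r) = r^2 * log(r^2), with U(0) defined as 0."""
    return r2 * math.log(r2) if r2 > 0.0 else 0.0

def solve(A, b):
    """Solve A x = b (b may have several columns) by Gaussian elimination
    with partial pivoting. Assumes A is square and nonsingular."""
    n = len(A)
    M = [list(A[i]) + list(b[i]) for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, len(M[r])):
                M[r][c] -= f * M[col][c]
    x = [[0.0] * len(b[0]) for _ in range(n)]
    for i in reversed(range(n)):
        for k in range(len(b[0])):
            s = M[i][n + k] - sum(M[i][j] * x[j][k] for j in range(i + 1, n))
            x[i][k] = s / M[i][i]
    return x

def fit_tps(src, dst):
    """Fit a 2D thin plate spline that maps each src control point to dst.
    Returns n radial weights followed by 3 affine coefficients, each with
    one column for x and one for y."""
    n = len(src)
    A = [[0.0] * (n + 3) for _ in range(n + 3)]
    b = [[0.0, 0.0] for _ in range(n + 3)]
    for i, (xi, yi) in enumerate(src):
        for j, (xj, yj) in enumerate(src):
            A[i][j] = tps_kernel((xi - xj) ** 2 + (yi - yj) ** 2)
        # Affine part [1, x, y], mirrored to enforce the side conditions.
        for k, v in enumerate((1.0, xi, yi)):
            A[i][n + k] = v
            A[n + k][i] = v
        b[i] = list(dst[i])
    return solve(A, b)

def apply_tps(params, src, point):
    """Warp a single (x, y) point with the fitted spline."""
    n = len(src)
    x, y = point
    out = []
    for k in range(2):
        val = params[n][k] + params[n + 1][k] * x + params[n + 2][k] * y
        for i, (xi, yi) in enumerate(src):
            val += params[i][k] * tps_kernel((x - xi) ** 2 + (y - yi) ** 2)
        out.append(val)
    return tuple(out)

# Hypothetical control points: corners and centre of a unit square,
# with slightly displaced targets.
src = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (0.5, 0.5)]
dst = [(0.0, 0.0), (1.0, 0.1), (-0.1, 1.0), (1.1, 0.9), (0.55, 0.45)]
params = fit_tps(src, dst)
warped = apply_tps(params, src, (0.25, 0.75))
```

The spline interpolates the control points exactly and reduces to a plain affine map when the targets are an affine image of the sources, which is why it is a common choice for smooth, non-rigid alignment of segmented masks.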
